Beyond the Megapixel: Unlocking the Hidden Depth of Android Phone Cameras

In the relentless race for smartphone supremacy, camera specifications have become a primary battleground. We’re bombarded with ever-increasing megapixel counts, complex lens arrays, and astonishing zoom capabilities. While these features are impressive, they only tell part of the story. A quieter, more profound revolution is happening just beneath the surface, one that isn’t about capturing more pixels but about understanding the world in a new dimension: depth. Many modern Android phones are equipped with sophisticated hardware capable of perceiving depth, effectively giving them 3D vision. This capability is the key to unlocking next-generation augmented reality, computational photography, and a host of innovative applications.

However, a significant chasm exists between hardware potential and software reality. A phone’s ability to “see” in 3D is often locked within proprietary systems, inaccessible to the wider developer community. This fragmentation creates a challenging environment, stifling innovation and preventing third-party apps from using the full power of the Android devices in our pockets. This article dives deep into the world of depth sensing on Android, exploring the technology, the complex API landscape, and the incredible potential that awaits if these barriers can be overcome.

The Third Dimension: Understanding Camera Depth Sensing

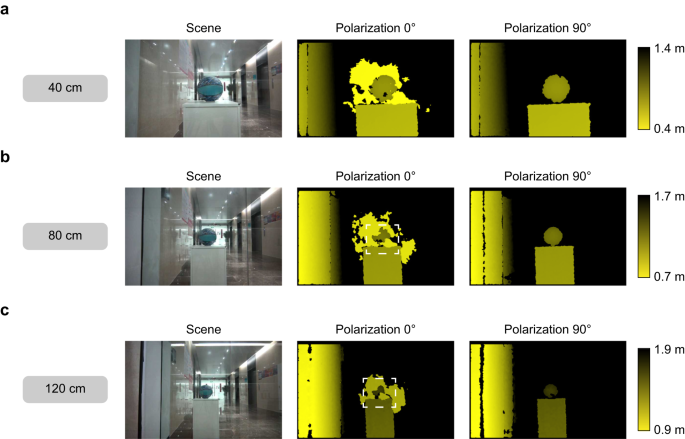

At its core, depth sensing is the process of measuring the distance to various points in a scene from the camera’s perspective. Instead of a flat, two-dimensional image, the camera system generates a “depth map.” This is typically a grayscale image where each pixel’s brightness value corresponds to its distance from the sensor—for instance, white pixels for objects close by and black pixels for those far away. This additional layer of data provides crucial spatial context that a standard RGB image lacks. It’s the difference between a photograph and a rudimentary 3D model of a scene.
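To make the grayscale convention concrete, here is a minimal sketch in plain Python of turning a grid of distances into 8-bit brightness values, with near objects white and far objects black. The function name `depth_to_gray` and the `near_m`/`far_m` working range are illustrative assumptions, not part of any Android API.

```python
def depth_to_gray(depth_m, near_m, far_m):
    """Map a 2D grid of distances (meters) to 8-bit gray: near = 255, far = 0."""
    span = far_m - near_m
    if span <= 0:
        raise ValueError("far_m must be greater than near_m")
    gray = []
    for row in depth_m:
        out_row = []
        for d in row:
            # Clamp to the working range so out-of-range readings stay valid.
            clamped = min(max(d, near_m), far_m)
            # Invert the scale so that closer objects come out brighter.
            out_row.append(round(255 * (far_m - clamped) / span))
        gray.append(out_row)
    return gray


if __name__ == "__main__":
    # One row of four pixels at 0 m, 1 m, 4 m and 10 m, viewed over a 0-4 m range.
    print(depth_to_gray([[0.0, 1.0, 4.0, 10.0]], near_m=0.0, far_m=4.0))
```

The working range matters: a narrow range spends all 256 gray levels on nearby detail, while a wide one keeps distant objects distinguishable at the cost of precision up close.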

How Do Phones “See” in 3D?

Manufacturers have employed several clever technologies to grant Android phones this three-dimensional sight, each with its own strengths and weaknesses:

- Time-of-Flight (ToF) Sensors: This is one of the most accurate methods. A ToF sensor emits a pulse of infrared light and measures the time it takes for the light to bounce off an object and return. Since the speed of light is constant, this “time of flight” converts directly into distance: the speed of light multiplied by half the round-trip time. LiDAR (Light Detection and Ranging), famously used by Apple but also present in some high-end Android devices, is an advanced form of ToF that can map entire scenes with high accuracy.

- Stereo Vision: Mimicking human eyesight, this technique uses two or more cameras positioned a short distance apart. By capturing slightly different perspectives of the same scene, the system can analyze the parallax (the apparent shift in position of an object) between the images to triangulate the distance to various points. The wider the baseline between the cameras, the larger that shift for a given distance, and the more accurate the depth estimate, particularly for faraway objects.

- Dual Pixel Autofocus (DPAF): A more software-centric approach, DPAF leverages the phase-detection pixels already present on many modern camera sensors for fast autofocus. Each pixel is essentially composed of two separate photodiodes. By comparing the signals from these two “half-pixels,” the system can create a basic, lower-resolution depth map. While not as precise as a dedicated ToF sensor, it’s a cost-effective way to enable features like portrait mode on a wider range of devices.
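The arithmetic behind the first two techniques is compact enough to sketch. The Python below assumes an idealized pulse-based ToF sensor and a rectified stereo pair described by the pinhole model; the function names and the sample numbers are illustrative, and real devices add calibration and noise filtering on top.

```python
SPEED_OF_LIGHT_M_S = 299_792_458.0


def tof_distance_m(round_trip_s):
    """Distance from a time-of-flight reading: light covers the path twice."""
    return SPEED_OF_LIGHT_M_S * round_trip_s / 2.0


def stereo_depth_m(focal_px, baseline_m, disparity_px):
    """Depth from stereo parallax: Z = f * B / d for a rectified camera pair."""
    if disparity_px <= 0:
        raise ValueError("disparity must be positive (zero means infinitely far)")
    return focal_px * baseline_m / disparity_px


if __name__ == "__main__":
    # A 10 ns round trip corresponds to roughly 1.5 m.
    print(tof_distance_m(10e-9))
    # Hypothetical phone: 1000 px focal length, 12 mm baseline, 8 px disparity.
    print(stereo_depth_m(1000.0, 0.012, 8.0))
```

The stereo formula also shows why baseline matters: with only 12 mm between lenses, an object 1.5 m away shifts by just 8 pixels in this example, so a one-pixel matching error already moves the estimate by a noticeable fraction.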

From Raw Data to Usable Map

Regardless of the hardware used, the end product is the depth map. This data can be used in real-time for applications like AR or bundled with a traditional photo. The “Dynamic Depth” format, for example, is an open standard proposed by Google for embedding a depth map within a standard JPEG file. This allows for powerful post-processing effects, like adjusting the focal point or the intensity of the background blur long after the picture has been taken.

A Fragmented Ecosystem: The Developer’s Challenge

Having powerful depth-sensing hardware is only half the battle. For the broader app ecosystem to benefit, developers need a standardized, reliable way to access this data. This is where the Android ecosystem’s greatest strength—its diversity—also becomes a significant weakness. Accessing depth data is a journey through a labyrinth of inconsistent APIs and proprietary walls.

The Official Path: Camera2 and CameraX

Google provides two main APIs for camera interaction. Camera2 is the powerful, low-level framework that offers granular control over the camera hardware, intended for pro-level camera apps. CameraX is a more modern Jetpack library built on top of Camera2, designed to simplify development and handle device-specific complexities automatically.

In theory, the Camera2 API has supported depth output for years. It defines specific formats like DEPTH16 and capabilities like REQUEST_AVAILABLE_CAPABILITIES_DEPTH_OUTPUT. A developer should be able to query a device’s camera characteristics, see if it supports depth output, and if so, request a synchronized stream of RGB and depth frames. The reality, however, is starkly different. Many manufacturers that include sophisticated ToF or LiDAR sensors in their Android phones choose not to expose this hardware through the standard Android APIs. The sensor’s data is reserved exclusively for their own native camera app to power its unique portrait modes or special features, creating a competitive moat.
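When a device does expose DEPTH16, each pixel arrives as a 16-bit sample that packs a distance and a confidence code together. The sketch below follows the layout as described in the Android ImageFormat.DEPTH16 documentation (low 13 bits for range in millimeters, high 3 bits for confidence); it is written in Python for brevity, the function name `decode_depth16` is an assumption, and the same bit operations carry over to Kotlin or Java.

```python
def decode_depth16(sample):
    """Split one DEPTH16 sample into (range_mm, confidence between 0.0 and 1.0)."""
    sample &= 0xFFFF                   # treat as unsigned, in case a signed short was passed
    range_mm = sample & 0x1FFF         # low 13 bits: distance in millimeters
    conf_bits = (sample >> 13) & 0x7   # high 3 bits: confidence code
    # Code 0 stands for 100% confidence; codes 1..7 map linearly from 0 to 6/7.
    confidence = 1.0 if conf_bits == 0 else (conf_bits - 1) / 7.0
    return range_mm, confidence


if __name__ == "__main__":
    print(decode_depth16(0x05DC))           # 1500 mm with confidence code 0
    print(decode_depth16((1 << 13) | 800))  # 800 mm with the lowest confidence code
```

Filtering on the confidence value before using a sample is usually worthwhile, since low-confidence readings tend to cluster on reflective or very dark surfaces.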

The Proprietary Wall and the ARCore Workaround

This decision to withhold data creates a “proprietary wall.” A developer might know that a top-tier phone has a cutting-edge LiDAR scanner, but their app can only see the standard camera streams, rendering the advanced sensor useless to them. This severely limits third-party innovation in areas like 3D scanning, advanced photo editing, and augmented reality.

To bridge this gap, Google offers ARCore, which is delivered to devices through the Google Play Services for AR app. ARCore is a high-level platform designed specifically for building augmented reality experiences. Crucially, ARCore often has deeper access to the device’s hardware than a standard app. It can fuse data from the camera, IMU (inertial measurement unit), and, if the OEM allows it, the dedicated depth sensors. When a hardware sensor isn’t available or accessible, ARCore can even use sophisticated machine learning algorithms to estimate depth from a single camera (monocular depth estimation). While this is a fantastic tool for AR developers, it’s not a universal solution. It provides depth data processed and optimized for AR, not the raw, high-fidelity stream a photography or 3D modeling app might require.

Beyond Bokeh: What a Depth-Aware Android Could Do

If standardized access to depth data became ubiquitous, it would catalyze a wave of innovation across the app landscape, moving far beyond the simple background blur we see today. Android news coverage tends to focus on hardware, but standardized software access is what would unlock that hardware’s potential.

Revolutionizing Mobile Photography

With a precise depth map tied to every photo, the concept of “editing” would be transformed. Imagine these scenarios:

- Cinematic Relighting: Adjusting the lighting on a subject’s face in post-production. Since the app understands the 3D geometry of the face, it can cast realistic shadows and highlights as if you were moving a light source around in a studio.

- Volumetric Fog Effects: Adding a layer of fog or mist to a landscape photo that realistically wraps around trees and settles in valleys, because the app knows which parts of the scene are further away.

- Accurate Measurements: Turning your camera into a reliable measurement tool, capable of calculating the dimensions of furniture or the area of a room directly from a photograph.
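The measurement scenario comes down to back-projecting pixels into 3D and taking the distance between them. Here is a minimal Python sketch under stated assumptions: a pinhole camera with known intrinsics (`fx`, `fy`, `cx`, `cy`, all in pixels), and depth values that measure distance along the optical axis rather than along the ray. The function names and sample intrinsics are hypothetical.

```python
import math


def back_project(u, v, depth_m, fx, fy, cx, cy):
    """Turn pixel (u, v) plus its depth into a 3D point in camera coordinates."""
    x = (u - cx) * depth_m / fx
    y = (v - cy) * depth_m / fy
    return (x, y, depth_m)


def distance_between(p, q):
    """Straight-line distance in meters between two 3D points."""
    return math.dist(p, q)


if __name__ == "__main__":
    # Hypothetical intrinsics: 1000 px focal length, principal point at (500, 500).
    intrinsics = dict(fx=1000.0, fy=1000.0, cx=500.0, cy=500.0)
    left_edge = back_project(500, 500, 2.0, **intrinsics)
    right_edge = back_project(1000, 500, 2.0, **intrinsics)
    # Two points 500 px apart, both 2 m away, are about 1 m apart in the scene.
    print(distance_between(left_edge, right_edge))
```

Accuracy depends directly on the depth values at the two chosen pixels, so sampling a small neighborhood and taking the median is a common way to steady the result.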

The Next Generation of Augmented Reality

ARCore is already powerful, but direct access to high-quality depth streams would make AR experiences dramatically more immersive and realistic.

- Realistic Occlusion: This is the holy grail of AR. It’s the ability for virtual objects to appear correctly behind real-world objects. With a real-time depth map, an AR character could run behind a real couch, and its body would be correctly hidden from view, seamlessly blending the virtual and physical worlds.

- Physics-Based Interactions: Virtual objects could interact with the real world’s geometry. A virtual ball could bounce realistically off a real table and fall onto the floor, respecting the physical boundaries of the room.
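Occlusion in particular reduces to a per-pixel depth comparison: a virtual fragment is drawn only where it is closer to the camera than the real surface at that pixel. The following Python sketch illustrates the idea on small grids; the name `visible_mask`, the use of `None` for "no virtual content here", and the 2 cm tolerance are assumptions for illustration, and a production renderer would do this on the GPU.

```python
def visible_mask(real_depth_m, virtual_depth_m, tolerance_m=0.02):
    """Return True for each pixel where the virtual object should be drawn."""
    mask = []
    for real_row, virt_row in zip(real_depth_m, virtual_depth_m):
        row = []
        for real, virt in zip(real_row, virt_row):
            if virt is None:
                # No virtual content covers this pixel.
                row.append(False)
            else:
                # Draw only if the virtual surface is in front of the real one;
                # the tolerance absorbs sensor noise where surfaces touch.
                row.append(virt <= real + tolerance_m)
        mask.append(row)
    return mask


if __name__ == "__main__":
    real = [[1.0, 3.0, 3.0]]        # a couch at 1 m, then a wall at 3 m
    virtual = [[2.0, 2.0, None]]    # a character standing 2 m away
    print(visible_mask(real, virtual))
```

In the example, the character is hidden where the couch is nearer and visible against the wall, which is the behavior described in the occlusion bullet above.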

3D Scanning and Content Creation

The dream of easily capturing the world in 3D would become a reality. Users could walk around an object, scan it with their phone, and instantly generate a high-quality 3D model. This has transformative implications for e-commerce (previewing products in your home), game development, 3D printing, and preserving cultural artifacts.

Navigating the Landscape: Tips and Considerations

Given the complex and fragmented state of depth sensing on Android, both developers and consumers need to adopt specific strategies to navigate the market effectively.

For Developers: Best Practices and Pitfalls

- Start with ARCore: For any application involving augmented reality, ARCore is the most robust and reliable starting point. It provides the widest device compatibility and abstracts away many of the underlying hardware inconsistencies.

- Query, Don’t Assume: When using the Camera2 API, never assume a device has depth capabilities. Always query the CameraCharacteristics for depth output support before attempting to configure a depth stream. Gracefully degrade the user experience if it’s not available.

- Avoid the Pitfall of Device-Specific Code: It can be tempting to write code that targets a specific flagship model known to have a good depth sensor. This is a maintenance nightmare and goes against the spirit of the Android ecosystem. Stick to standardized APIs whenever possible.

- Monitor the Community: Keep an eye on open-source libraries and developer communities. Often, ambitious projects emerge that attempt to reverse-engineer proprietary SDKs or create unified interfaces for accessing this hidden data.

For Consumers: What to Look For in Android Phones

- Look Beyond the Spec Sheet: A manufacturer advertising a “ToF Sensor” or “3D Depth Camera” does not guarantee that your favorite third-party apps can use it. This marketing often only applies to the phone’s built-in camera software.

- Check ARCore Performance: A great indicator of a well-integrated depth system is strong performance with Google’s ARCore. Read reviews from tech outlets that specifically test AR capabilities. Devices that handle AR occlusion and tracking well are more likely to have accessible and accurate depth data.

- Research App-Specific Support: If you are passionate about a specific use case, like 3D scanning, research which Android phones are officially supported by leading apps in that category (e.g., Polycam, Scaniverse). These developers often work directly with manufacturers or target devices with proven, accessible sensors.

Conclusion: A Future in Three Dimensions

The hardware to capture the world in three dimensions is no longer a futuristic concept; it is already present in millions of Android phones. We are at an inflection point where the primary barrier to a new era of spatial computing and computational photography is not a lack of capable silicon, but a lack of software standardization. The fragmented, proprietary approach to depth data access is holding back a wave of potential innovation from a global community of developers.

For Android to truly compete and lead in the next generation of mobile experiences, from the metaverse to advanced assistive technologies, a concerted effort is needed. Whether through new versions of Android, more powerful and consistent CameraX APIs, or pressure from the developer community, unlocking this hidden dimension is paramount. The future of the smartphone camera is not just about capturing a beautiful image, but about understanding the space in which that image was taken. The sooner we have the keys, the sooner we can build that future.