The Invisible Frontier: How Next-Gen Camera Tech is Redefining Android Flagships

Introduction: The Pursuit of the Infinite Display

The smartphone industry is defined by a relentless pursuit of perfection, a cycle of innovation in which the goalposts keep moving. For the better part of a decade, Android phone manufacturers have been chasing a single aesthetic dream: the monolithic, all-screen device. It is a vision of a gadget that is nothing but content, a window into the digital world with no bezels, no chins, and, most importantly, no interruptions.

While we have successfully slimmed down bezels to near-invisibility, one stubborn component has remained an obstacle: the front-facing camera. From the wide notches of 2017 to the teardrops of 2018 and the now-ubiquitous punch-hole cutouts, the “selfie” camera has been a necessary blemish on display perfection. However, recent Android news coverage suggests that the next generation of flagship devices, slated for 2025 and 2026, is poised to finally solve this engineering conundrum.

Recent industry whispers regarding upcoming ultra-premium devices suggest a radical redesign in how front-facing sensors are integrated. We are moving away from the era of “hiding” the camera in plain sight and entering the era of true invisibility. This article examines the technical evolution of Under-Display Camera (UDC) technology, the role of Artificial Intelligence in image reconstruction, and how these advancements will ripple across the wider ecosystem of Android devices.

Section 1: The Evolution of Screen Real Estate

To understand where we are going, we must understand the engineering trajectory of the last few years. The battle for screen real estate has driven the most significant design changes in modern mobile computing.

The Era of Compromise: Notches and Cutouts

The transition from 16:9 aspect ratios to taller, immersive screens necessitated the removal of the top bezel. This birthed the “notch,” a design compromise that housed the camera, proximity sensors, and earpiece. While functional, it was aesthetically divisive. The industry quickly pivoted to the “punch-hole” design, which isolated the camera module into a small circle floating within the display matrix.

While the punch-hole is currently the standard for most high-end Android phones, it represents a technological plateau. It maximizes space but still disrupts the user interface. Status bars must be taller to accommodate it, and full-screen video content is either cropped or marred by a black dot. The industry knows this is not the endgame.

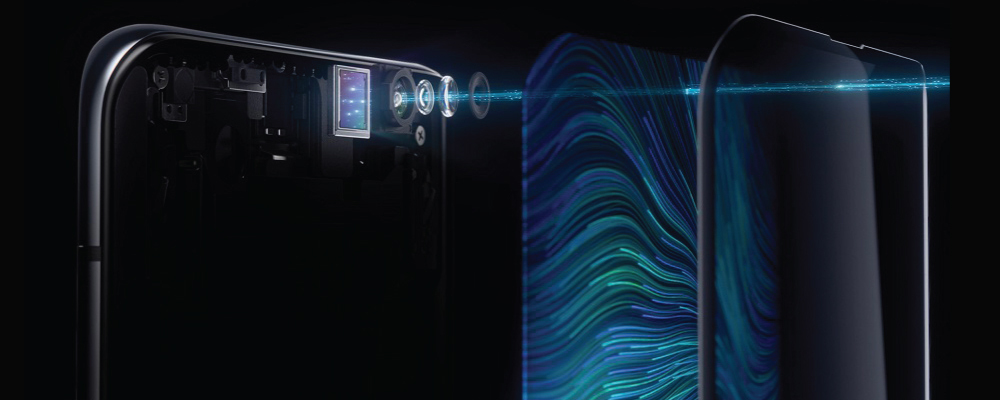

The Shift to Under-Display Technology

The “Shocking Redesign” alluded to in recent hardware discussions refers to the maturation of Under-Display Camera (UDC) technology. Early iterations of this tech—seen primarily in niche gaming phones or experimental foldables—were lackluster. The area of the screen covering the camera often looked like a pixelated screen door, and the photos produced were hazy, suffering from light diffraction.

However, the technology expected in upcoming flagship generations is reported to be a substantial step forward. Manufacturers are reportedly utilizing new cathode materials and transparent pixel structures that allow for high light transmission without sacrificing display density. If these reports hold, the “patch” over the camera should be very hard to distinguish from the rest of the screen, and the sensor beneath should finally receive enough light to produce competitive imagery.

Why Now? The Convergence of Hardware and AI

Why are we seeing these rumors solidify now? It is the convergence of two distinct technological timelines. First, OLED panel manufacturing has reached a level of precision where transparent sub-pixel arrangements are viable at mass-production scales. Second, and perhaps more importantly, mobile chipsets now possess the Neural Processing Unit (NPU) power required to fix the physics problems created by shooting through a screen.

Section 2: Technical Deep Dive – How the “Invisible” Works

Creating a camera that sees through a screen is one of the most difficult optical challenges in consumer electronics. It requires a complete rethinking of both the display panel and the image signal processing pipeline.

The Physics of Transparency and Diffraction

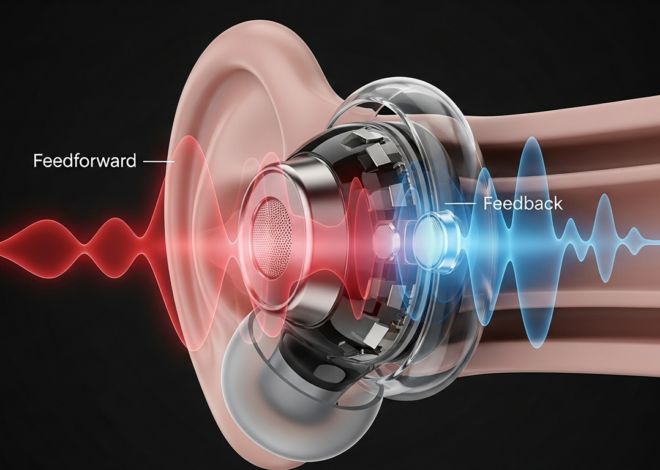

An OLED screen is essentially a dense grid of tiny lights (pixels) and wiring. Placing a camera behind this grid is akin to taking a photo through a window screen. Two main physical issues arise:

- Light Attenuation: The display layer blocks a significant percentage of incoming light, so the sensor collects fewer photons. The weaker signal leads to more visible noise and poor low-light performance.

- Diffraction: As light passes through the tiny gaps between pixels, it diffracts and spreads out. This causes point light sources (like streetlamps or candles) to streak and reduces overall image sharpness, creating a “foggy” effect.
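To put rough numbers on the two effects above, here is a minimal Python sketch. It assumes a shot-noise-limited sensor (so signal-to-noise ratio scales with the square root of the light collected) and treats the pixel grid as a simple periodic grating. The 25% transmittance and 60 µm pitch are hypothetical values chosen for illustration, not specifications of any real panel.

```python
import math


def shot_noise_snr_ratio(transmittance: float) -> float:
    """SNR behind the display relative to an unobstructed sensor.

    Assumes shot-noise-limited capture, where SNR grows with the
    square root of the number of photons collected.
    """
    if not 0.0 < transmittance <= 1.0:
        raise ValueError("transmittance must be in (0, 1]")
    return math.sqrt(transmittance)


def first_order_angle_deg(wavelength_nm: float, pitch_um: float) -> float:
    """First-order diffraction angle from the grating equation
    sin(theta) = wavelength / pitch."""
    ratio = (wavelength_nm * 1e-9) / (pitch_um * 1e-6)
    if ratio > 1.0:
        raise ValueError("no propagating first order for this pitch")
    return math.degrees(math.asin(ratio))


# Hypothetical values, for illustration only.
snr = shot_noise_snr_ratio(0.25)           # panel passes 25% of the light
angle = first_order_angle_deg(550, 60.0)   # green light, 60 um pixel pitch

print(f"SNR relative to an unobstructed sensor: {snr:.0%}")
print(f"First diffraction order: {angle:.2f} degrees off axis")
```

Even under these simplified assumptions, the pattern is clear: losing three quarters of the light halves the signal-to-noise ratio, and a regular pixel grid throws faint copies of every bright point slightly off axis, which is what shows up as streaks.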

To combat this, next-generation Android devices are reported to employ “High-Transparency Anodes” and specialized pixel geometries. Instead of the standard diamond pixel layout, the area above the camera may use an arrangement that enlarges the gaps between pixels (the optical aperture) while keeping enough pixel density to render text and images sharply to the human eye.
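The trade-off between gap size and pixel density can be sketched with simple geometry. The Python snippet below models each pixel as a square cell containing one opaque square region (emitter plus wiring), which is a deliberate simplification of real layouts. All dimensions are hypothetical.

```python
def open_area_fraction(pitch_um: float, opaque_side_um: float) -> float:
    """Fraction of a square pixel cell left open for light to pass.

    Models the cell as pitch x pitch with one opaque square inside it.
    """
    if not 0.0 <= opaque_side_um <= pitch_um:
        raise ValueError("opaque region must fit inside the pixel cell")
    return 1.0 - (opaque_side_um / pitch_um) ** 2


def pixels_per_inch(pitch_um: float) -> float:
    """Pixel density implied by a given pixel pitch (25,400 um per inch)."""
    return 25400.0 / pitch_um


# Hypothetical layouts: (label, pixel pitch in um, opaque region side in um).
layouts = [
    ("standard zone", 60.0, 50.0),
    ("camera zone, smaller opaque area", 60.0, 40.0),
    ("camera zone, wider pitch", 85.0, 50.0),
]

for label, pitch, opaque in layouts:
    print(
        f"{label}: {pixels_per_inch(pitch):.0f} ppi, "
        f"{open_area_fraction(pitch, opaque):.0%} open area"
    )
```

In this toy model, widening the pitch opens up the most area but costs pixel density, while shrinking the opaque region keeps density constant. That second route is the one the reported materials and geometry changes appear to target.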

Computational Photography: The AI Restoration

Hardware is only half the battle. The true magic lies in the software. When a sensor captures an image through a display, the raw data is flawed. It lacks contrast and suffers from color shifting. This is where AI-based restoration comes in.

Modern imaging pipelines use deep learning models trained on large sets of paired images (one taken with a clear lens, one taken through a screen). The AI learns to:

- De-haze: Mathematically remove the “fog” caused by the display layer.

- De-noise: Reconstruct detail in shadowed areas where light transmission was low.

- Diffraction Correction: Identify and correct the specific light streaks caused by the pixel grid.
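As a rough illustration of the de-haze idea, the Python sketch below uses a toy veiling-haze model with a closed-form inverse. This is a classical stand-in, not the learned approach described above: a real network has to estimate the correction from data because the true degradation varies across the image and is not known in advance. The function names and the `contrast` and `veil` parameters are hypothetical.

```python
from typing import List

Image = List[List[float]]  # grayscale rows, pixel values in [0.0, 1.0]


def degrade(clean: Image, contrast: float, veil: float) -> Image:
    """Toy forward model of shooting through a display:
    observed = contrast * clean + (1 - contrast) * veil.
    Also shows how a (clean, degraded) training pair could be synthesized.
    """
    return [[contrast * p + (1.0 - contrast) * veil for p in row] for row in clean]


def dehaze(observed: Image, contrast: float, veil: float) -> Image:
    """Closed-form inverse of `degrade`, clamped to the valid pixel range."""
    if contrast <= 0.0:
        raise ValueError("contrast must be positive to invert the model")
    offset = (1.0 - contrast) * veil
    return [
        [min(1.0, max(0.0, (p - offset) / contrast)) for p in row]
        for row in observed
    ]


def michelson_contrast(img: Image) -> float:
    """(max - min) / (max + min) over all pixels; 0.0 for an all-black image."""
    flat = [p for row in img for p in row]
    hi, lo = max(flat), min(flat)
    return (hi - lo) / (hi + lo) if hi + lo > 0 else 0.0


# Hypothetical 2x2 test image and haze parameters.
clean = [[0.0, 1.0], [0.25, 0.75]]
hazy = degrade(clean, contrast=0.6, veil=0.5)
restored = dehaze(hazy, contrast=0.6, veil=0.5)

print("contrast, clean:   ", round(michelson_contrast(clean), 3))
print("contrast, hazy:    ", round(michelson_contrast(hazy), 3))
print("contrast, restored:", round(michelson_contrast(restored), 3))
```

The inversion is exact here only because the haze parameters are known and no noise is present. With real captures, dividing by a small `contrast` value would also amplify sensor noise, which is one reason de-hazing and de-noising are learned together.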

This processing runs in milliseconds. The result is that the user sees a crisp, clear selfie, unaware that the raw input looked like a blurry mess. This heavy reliance on computational photography explains why the technology is debuting in “Ultra” tier devices first: they are the only ones with the processing headroom to run these algorithms in real time.
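To see why real-time operation demands so much headroom, consider the time available per video frame. The short Python sketch below computes that budget. The 20 ms restoration latency is a hypothetical figure used only to show the comparison, not a measurement from any device.

```python
def frame_budget_ms(fps: float) -> float:
    """Time available to process one frame at a given frame rate."""
    if fps <= 0:
        raise ValueError("fps must be positive")
    return 1000.0 / fps


def fits_in_real_time(latency_ms: float, fps: float) -> bool:
    """True if per-frame processing finishes within the frame budget."""
    return latency_ms <= frame_budget_ms(fps)


# Hypothetical per-frame restoration latency, not a measured value.
restoration_ms = 20.0

for fps in (30, 60):
    verdict = "fits" if fits_in_real_time(restoration_ms, fps) else "does not fit"
    print(f"{fps} fps: budget {frame_budget_ms(fps):.1f} ms, {verdict}")
```

A still photo can take as long as the user will tolerate, but video restoration has to finish inside this budget on every frame, alongside everything else the pipeline does.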

Case Study: The Foldable Proving Ground

We have already seen the beta version of this evolution in the foldable sector. The Galaxy Z Fold series, for example, has utilized UDC technology for several generations on its inner screen. While the first iteration was obvious to the eye and produced poor photos, subsequent generations have increased the pixel density over the camera (making it harder to see) and improved the AI processing.

The upcoming shift in traditional “slab” phones indicates that the technology has finally graduated from “experimental” to “flagship standard.” The trade-offs are no longer significant enough to deter the average consumer.

Section 3: Implications for the Android Ecosystem

The move toward invisible sensors is not just about making phones look prettier. It has profound implications for the utility, security, and form factor of future Android devices.

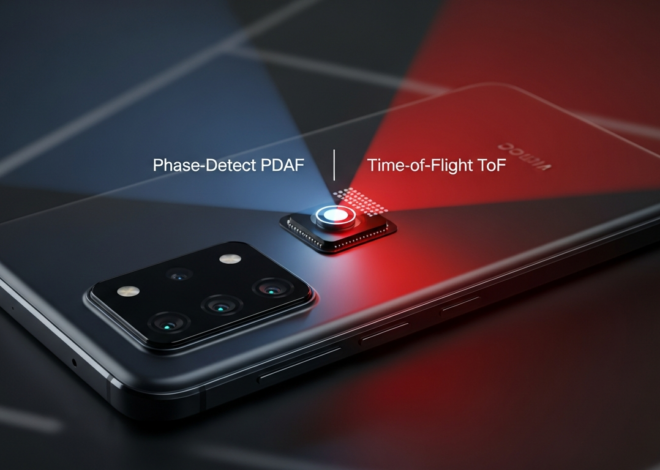

Redefining Biometrics and Security

One of the biggest hurdles for UDC technology has been facial recognition. Secure face unlock (like that used for banking apps) relies on infrared dot projectors and cameras that require clear optical paths. If the selfie camera is hidden, can we still have secure biometrics?

The answer lies in the spectrum of light. OLED panels are often more transparent to Infrared (IR) light than visible light. This suggests that next-gen Android flagships might reintegrate robust face unlock systems beneath the display, eliminating the need for the pill-shaped cutouts seen on competitor devices. This would offer a significant advantage: high-security biometrics without the visual clutter.

Impact on Tablets and Laptops

Innovations in the smartphone space often carry over to other Android devices. Tablets, which are increasingly used for professional video conferencing, stand to benefit immensely. Currently, tablet bezels are kept somewhat thick to house high-quality webcams. By perfecting UDC technology, manufacturers can push tablet designs to be truly edge-to-edge, reducing the physical footprint of a 12-inch or 14-inch device without sacrificing screen size.

Furthermore, as Android integration with Windows and ChromeOS deepens, we may see this technology jump to laptops, finally killing the thick top bezel on ultrabooks.

The “Smart Surface” Future

Looking further ahead, if cameras and sensors can be effectively hidden behind display panels, the concept of a “device” changes. We move toward “Smart Surfaces.” Imagine a smart home hub where the entire front is a screen, but it can still track your movement and recognize your face. Imagine automotive dashboards that are seamless displays from door to door, with driver-monitoring cameras completely invisible to the occupants.

The technology debuting in the next wave of Android phones is the precursor to an era where hardware sensors disappear into the background, leaving only the user interface.

Section 4: Pros, Cons, and Consumer Recommendations

As we approach the release of devices featuring these radical redesigns, consumers need to weigh the benefits against the potential drawbacks. Is the “perfect” look worth the price of admission?

The Pros

- Total Immersion: Gaming and video consumption become completely uninterrupted experiences. There is no notch cutting into the health bar of a game or the head of an actor in a movie.

- Aesthetic Purity: The device looks futuristic and clean, aligning with the minimalist design philosophy that dominates modern tech.

- Durability: Fewer cutouts in the display panel can theoretically improve its structural integrity, though the gain is marginal next to what a case or other drop protection provides.

The Cons

- Image Quality Ceiling: No matter how good the AI is, a camera behind a screen will always be optically inferior to a camera with a clear lens. For casual selfies, it will be fine. For content creators or “vloggers,” it may still be a downgrade.

- Repair Costs: Complex display panels with varying pixel densities and integrated sensor zones are significantly more expensive to manufacture and, consequently, to replace if broken.

- Early Adopter Tax: As with all new tech, the first generation of “perfect” UDC phones will command a premium price.

Best Practices for Buyers

If you are in the market for a new Android device in the coming year, consider your usage patterns:

- The Video Caller: If you take Zoom calls all day for work, wait for reviews. Check specifically for “blooming” around light sources in video feeds, as AI correction is harder to do in real-time video than in still photos.

- The Media Consumer: If your phone is your primary TV, this technology is a dream come true. The immersion factor cannot be overstated.

- The Creator: If you use the front camera for TikTok or Instagram Reels, you might want to stick to standard punch-hole designs for one more generation, or ensure the phone allows you to use the rear cameras with a preview on the front screen (a feature common in foldables).

Conclusion

The rumored redesigns of upcoming Android flagships mark a pivotal moment in mobile history. We are witnessing the death of the bezel and the disappearance of the sensor. While the “shocking” leaks regarding the Galaxy S26 Ultra and its contemporaries serve as the catalyst for this conversation, the implications go far beyond a single phone model.

This evolution represents the triumph of computational photography over physical limitations and signals a new design language for Android devices of every kind. As we move into 2025 and beyond, the definition of a premium device will shift from “what features can we see” to “what features are invisible.” For the consumer, the future is bright, bezel-less, and beautifully unobstructed.