Android’s Next Dimension: Unpacking the Future of Spatial Computing with Google’s XR Push

The Dawn of a New Computing Paradigm: Android Enters the World of XR

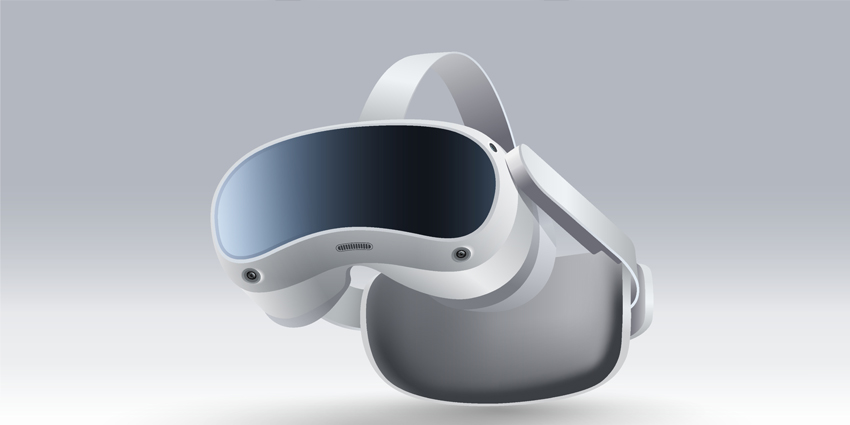

For over a decade, the Android ecosystem has dominated the mobile landscape, powering billions of smartphones and tablets worldwide. However, the next great platform war is not being fought over pocket-sized screens, but over the very fabric of our reality. Extended Reality (XR), an umbrella term for Augmented, Virtual, and Mixed Reality, is poised to redefine how we interact with digital information. Google is now making a significant and strategic re-entry into this space, signaling a future where the digital and physical worlds merge seamlessly. This latest push isn’t just another experimental project; it’s a calculated move to build the “Android of XR,” an open, scalable platform designed to power a new generation of spatial computing devices. The initiative aims to leverage Android’s massive developer base and open-source ethos to challenge the closed ecosystems being built by competitors. The implications are profound, suggesting a future where our favorite Android gadgets are no longer confined to our hands but are integrated directly into our field of view, changing everything from communication and entertainment to work and education.

Section 1: Forging the Future: The Vision for an Android-Powered XR Ecosystem

The strategy behind building an Android-based XR platform is a masterclass in leveraging existing strengths to conquer a new frontier. At its core, this initiative is about creating a foundational operating system and hardware reference platform that other manufacturers can adopt and build upon. This is the very playbook that made Android the world’s most popular mobile OS, and Google is betting it can work again for spatial computing.

The Core Components of the Strategy

The collaboration pairs Google with a hardware specialist, and each side contributes a critical piece of the puzzle. Google provides the software backbone: a specialized version of Android optimized for the unique demands of XR. This involves re-architecting the OS to handle stereoscopic 3D rendering, low-latency sensor fusion, spatial audio, and novel input methods like hand and eye tracking. Furthermore, Google brings its formidable suite of AI and cloud services: Google Maps for spatial awareness, Google Assistant for voice interaction, and Google Cloud for processing-intensive tasks. On the other side, a partner like Magic Leap offers deep expertise in the incredibly complex hardware required for a compelling AR experience. This includes sophisticated optical systems, such as advanced waveguide displays that overlay bright, clear digital content on the wearer’s view of the real world, and the intricate sensor arrays needed for environmental understanding (SLAM, or Simultaneous Localization and Mapping).
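“Low-latency sensor fusion” is easiest to picture with a small example. The sketch below is a textbook complementary filter. It is not Google’s or Magic Leap’s implementation. It blends a gyroscope, which is fast but drifts, with an accelerometer, which is noisy but anchored to gravity, to estimate head pitch. The function names, the 0.98 weight and the sample format are assumptions chosen for illustration. Production headsets typically rely on more elaborate filters that also fuse camera tracking.

```python
import math


def accel_pitch(ax, ay, az):
    """Pitch (radians) implied by the gravity vector the accelerometer measures."""
    return math.atan2(-ax, math.sqrt(ay * ay + az * az))


def complementary_filter(samples, dt, alpha=0.98):
    """Fuse gyroscope and accelerometer readings into one pitch estimate.

    samples: iterable of (gyro_pitch_rate_rad_s, ax, ay, az) tuples (assumed format).
    alpha:   trust placed in the integrated gyro (fast, low-noise, but drifts);
             the remaining (1 - alpha) pulls the estimate toward the
             accelerometer (noisy, but anchored to gravity, so it cannot drift).
    """
    pitch = 0.0
    for rate, ax, ay, az in samples:
        gyro_estimate = pitch + rate * dt           # integrate angular rate
        accel_estimate = accel_pitch(ax, ay, az)    # absolute but noisy reference
        pitch = alpha * gyro_estimate + (1 - alpha) * accel_estimate
    return pitch
```

Consider a stationary, tilted headset whose gyro has a constant bias. Plain gyro integration would drift without bound. The filtered estimate instead settles within a few hundredths of a radian of the true tilt.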

Why Android is the Perfect Foundation

Choosing Android as the foundation for this new XR platform plays directly to Google’s strengths. Here’s why:

- Massive Developer Ecosystem: There are millions of Android developers worldwide. By providing familiar tools like Android Studio, Kotlin, and a dedicated XR SDK, Google can significantly lower the barrier to entry for creating spatial applications. This is a crucial advantage over starting a new OS from scratch.

- Open Source Philosophy: An open platform encourages hardware diversity. Just as we have a wide variety of Android phones from Samsung, OnePlus, and others, we could see a future with XR glasses from numerous manufacturers, all running the same core OS. This fosters competition, drives down prices, and accelerates innovation.

- Interoperability and Connectivity: The new platform is expected to be deeply integrated with the existing Android ecosystem. Imagine receiving notifications from your phone seamlessly in your glasses, using your watch as a controller, or casting content from your tablet into a large virtual screen in your living room. This interconnectedness is a powerful selling point.

This initiative isn’t just about building a single product; it’s about cultivating an entire ecosystem. The goal is to create a standardized, yet flexible, platform that empowers developers and hardware partners to build the future of computing, taking Android beyond phones and into a new dimension of interaction.

Section 2: A Technical Deep Dive: Deconstructing an Android XR Platform

Building a seamless and powerful XR platform requires solving immense technical challenges across both software and hardware. An Android-based system for spatial computing is far more than just a mobile OS strapped to a headset; it’s a fundamental reimagining of the user interface, processing pipeline, and hardware integration.

The Software Stack: Re-Architecting Android for 3D Space

The core of the system is a version of Android heavily modified for spatial computing. This involves several key architectural changes:

- Spatial Compositor: Unlike a phone’s 2D display, an XR device needs a compositor that can manage and render multiple 2D and 3D application windows in a three-dimensional space. This system must handle occlusion (objects blocking other objects), lighting, and shadows in real time to create a believable experience, all while maintaining an incredibly high frame rate (90 Hz or more) to prevent motion sickness.

- Low-Latency Input Pipeline: In XR, the “motion-to-photon” latency is critical. This is the time between a user’s head movement and the corresponding update on the display. Any perceptible lag can cause severe discomfort, and a commonly cited target is about 20 milliseconds end to end. The Android input pipeline must be re-engineered to meet that budget. Precisely timestamped data from IMUs (Inertial Measurement Units), eye-tracking cameras, and hand-tracking sensors has to be integrated deep within the OS.

- Perception APIs: The platform needs a robust set of APIs that give developers access to the device’s understanding of the world. This includes APIs for plane detection (finding floors, walls, tables), mesh generation (creating a 3D model of the room), and semantic labeling (identifying objects like “chair” or “door”). These APIs are the building blocks for creating truly context-aware AR applications.
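To make the compositor’s job concrete, here is a simplified frame-planning step. It assumes each window is a flat layer at a known distance and that its GPU cost is known in advance. It uses the conventional ordering from real-time graphics:

- opaque layers are drawn front-to-back against a depth buffer, which handles occlusion;
- translucent layers are drawn back-to-front so alpha blending works.

It then checks the total cost against the roughly 11.1 ms frame budget that a 90 Hz refresh rate implies. The `Layer` type and `plan_frame` function are hypothetical and not part of any Android API.

```python
from dataclasses import dataclass

# Assumed target refresh rate; 90 Hz leaves roughly 11.1 ms to produce each frame.
FRAME_RATE_HZ = 90
FRAME_BUDGET_MS = 1000.0 / FRAME_RATE_HZ


@dataclass
class Layer:
    """Hypothetical description of one app window placed in 3D space."""
    name: str
    distance_m: float  # distance from the viewer to the layer, in metres
    render_ms: float   # estimated GPU time to draw the layer this frame
    opaque: bool


def plan_frame(layers):
    """Return (draw_order, fits_budget) for one frame.

    Opaque layers go front-to-back so a depth buffer can discard hidden
    pixels early (occlusion). Translucent layers follow, back-to-front
    (painter's algorithm), so alpha blending composites them correctly.
    """
    opaque = sorted((ly for ly in layers if ly.opaque), key=lambda ly: ly.distance_m)
    translucent = sorted(
        (ly for ly in layers if not ly.opaque),
        key=lambda ly: ly.distance_m,
        reverse=True,
    )
    order = [ly.name for ly in opaque + translucent]
    total_ms = sum(ly.render_ms for ly in layers)
    return order, total_ms <= FRAME_BUDGET_MS
```

When the summed cost overshoots the budget, a real compositor would have to drop detail or reproject an older frame rather than miss the display’s refresh.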
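The latency requirement is easiest to see with arithmetic. The sketch below sums a motion-to-photon budget from per-stage timings. It then shows why XR runtimes predict head pose forward: if a frame is rendered with a stale pose, virtual content is misplaced by the head’s angular velocity multiplied by the latency. The stage timings are illustrative assumptions, not measurements. Constant-velocity prediction is the simplest such scheme, and real runtimes use more sophisticated predictors. All names are hypothetical.

```python
# Illustrative stage timings in milliseconds; these are assumptions, not measurements.
EXAMPLE_STAGES_MS = {
    "imu_sampling": 1.0,
    "sensor_fusion": 1.0,
    "render_one_frame_at_90hz": 11.1,
    "display_scanout": 5.0,
}


def motion_to_photon_ms(stages_ms):
    """Total motion-to-photon latency: the sum of every pipeline stage."""
    return sum(stages_ms.values())


def misregistration_deg(head_rate_dps, latency_ms):
    """Angular error if content is drawn with the stale (unpredicted) head pose."""
    return abs(head_rate_dps) * latency_ms / 1000.0


def predict_yaw_deg(yaw_deg, head_rate_dps, latency_ms):
    """Constant-velocity prediction: render for where the head will be when
    the photons arrive, not where it was when the IMU was sampled."""
    return yaw_deg + head_rate_dps * latency_ms / 1000.0
```

With these assumed numbers, the budget sums to about 18 ms. A brisk 100-degree-per-second head turn would then misplace unpredicted content by roughly 1.8 degrees, enough to make anchored objects visibly swim.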
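Plane detection is commonly built on RANSAC (random sample consensus). The sketch below implements that generic technique in plain Python. It is not the code behind any shipping perception API. It fits candidate planes to random triples of depth points and keeps the plane that the most points agree with. The function names, the 2 cm inlier threshold and the iteration count are illustrative assumptions.

```python
import random


def plane_from_points(p1, p2, p3):
    """Plane through three points as (unit normal n, offset d) with n.x + d = 0,
    or None if the points are collinear."""
    u = [p2[i] - p1[i] for i in range(3)]
    v = [p3[i] - p1[i] for i in range(3)]
    n = [u[1] * v[2] - u[2] * v[1],   # cross product u x v
         u[2] * v[0] - u[0] * v[2],
         u[0] * v[1] - u[1] * v[0]]
    length = (n[0] ** 2 + n[1] ** 2 + n[2] ** 2) ** 0.5
    if length < 1e-9:
        return None
    n = [c / length for c in n]
    d = -sum(n[i] * p1[i] for i in range(3))
    return n, d


def ransac_plane(points, iterations=200, threshold=0.02, seed=0):
    """Find the dominant plane in a list of (x, y, z) points.

    Each iteration fits a plane to 3 random points and counts inliers
    (points within `threshold` metres of it); the best-supported plane wins.
    """
    rng = random.Random(seed)
    best_plane, best_inliers = None, []
    for _ in range(iterations):
        plane = plane_from_points(*rng.sample(points, 3))
        if plane is None:
            continue
        n, d = plane
        inliers = [p for p in points
                   if abs(sum(n[i] * p[i] for i in range(3)) + d) < threshold]
        if len(inliers) > len(best_inliers):
            best_plane, best_inliers = plane, inliers
    return best_plane, best_inliers
```

A real system would then remove the inliers and repeat the search to find walls and tabletops. It would also run on dedicated perception hardware rather than on Python lists.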

The Hardware Challenge: Balancing Power, Optics, and Ergonomics

The software ambitions must be matched by equally sophisticated hardware, a domain where a partnership with an optics specialist becomes invaluable.

- Optics and Display Technology: The “magic” of AR lies in the display. Advanced waveguides are a leading technology. These are thin, transparent optical elements embedded in the lens of the glasses. They take the light from a tiny micro-display (such as LCoS, micro-OLED, or microLED) and “guide” it across the lens and out toward the user’s eye, so digital content appears layered over the real world. The challenges are immense: achieving a wide field of view (FoV), high brightness for outdoor use, and excellent color accuracy, all while keeping the hardware thin and light.

- Custom Silicon (SoC): General-purpose smartphone processors are not optimized for XR. An XR-focused System-on-a-Chip (SoC), potentially a custom “Tensor XR” chip, would be necessary. This chip would need powerful CPU and GPU cores for rendering. More importantly, it would need dedicated co-processors or Neural Processing Units (NPUs) to handle the constant stream of sensor data for SLAM, hand tracking, and other AI-driven perception tasks efficiently and with low power consumption.

- Power and Thermal Management: Cramming this much processing power into a small, wearable form factor creates a massive thermal challenge. The device must be able to dissipate heat without becoming uncomfortable to wear. Battery life is the other side of this coin. The entire system, from the OS to the silicon, must be ruthlessly optimized for power efficiency to provide all-day usability, a feat no one has truly mastered yet.
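The field-of-view challenge is partly a pixel-budget problem. A quick calculation shows the trade-off, under the simplifying assumption that pixels are spread evenly across the view. Stretching the same micro-display over a wider field of view lowers angular resolution, measured in pixels per degree. About 60 pixels per degree roughly matches 20/20 visual acuity. The panel widths and angles below are hypothetical, not specifications of any product.

```python
import math

# Rough rule of thumb: 20/20 acuity resolves about 1 arcminute, i.e. ~60 pixels per degree.
ACUITY_PPD = 60.0


def pixels_per_degree(horizontal_pixels, fov_deg):
    """Average angular resolution, assuming pixels are spread evenly across the FoV."""
    return horizontal_pixels / fov_deg


def virtual_screen_fov_deg(width_m, distance_m):
    """Horizontal angle subtended by a flat virtual screen of the given width at a distance."""
    return math.degrees(2 * math.atan(width_m / (2 * distance_m)))
```

Take a hypothetical 1920-pixel-wide panel. Over a 30-degree field of view it yields 64 pixels per degree, but stretched to 70 degrees it drops to about 27. A 2 m virtual TV viewed from 3 m already spans about 37 degrees.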
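The “all-day” goal can be sanity-checked with simple energy arithmetic. Every number here is a hypothetical placeholder, not a measurement of any device: a glasses-sized battery of a few watt-hours and rough average draws per subsystem. What matters is the shape of the calculation. It shows why the whole system must average well under a watt to last a full day.

```python
# Hypothetical average power draw per subsystem, in watts (placeholders, not measurements).
EXAMPLE_DRAW_W = {
    "soc_and_npu": 1.5,
    "displays": 0.5,
    "cameras_and_sensors": 0.5,
    "radios": 0.5,
}


def runtime_hours(battery_wh, draw_w):
    """Runtime = stored energy (Wh) / total average power (W)."""
    return battery_wh / sum(draw_w.values())


def power_budget_w(battery_wh, target_hours):
    """Average power the whole device must stay under to reach a runtime target."""
    return battery_wh / target_hours
```

Assume a 5 Wh battery. A 3 W total draw would drain it in under two hours, and a 16-hour day would require the entire device to average about 0.31 W.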

Section 3: The Strategic Battlefield: Implications for the Broader Tech Landscape

Google’s renewed focus on XR is not happening in a vacuum. It is a direct response to a rapidly evolving market and a strategic move to secure a foothold in what many believe is the next major computing platform. This initiative reshapes the competitive landscape and has far-reaching implications for developers, businesses, and consumers.

The Platform Wars Enter a New Dimension

The tech industry is witnessing the opening salvos of the spatial computing platform war, a conflict that mirrors the smartphone wars of the last decade.

- Google vs. Apple: This is the central rivalry. Apple has established a high-end, vertically integrated benchmark with its visionOS and Vision Pro headset. It’s a powerful but closed “walled garden” ecosystem. Google’s strategy is the classic Android counter-play: create an open ecosystem that fosters hardware diversity and developer freedom. This will likely result in a market with a premium, tightly controlled Apple experience on one end, and a broad, varied, and more accessible Android XR ecosystem on the other.

- The Meta Factor: Meta, with its Quest line of headsets, has a significant head start in the consumer VR market. In 2024, Meta announced it would open up its “Horizon OS” to third-party hardware makers like ASUS and Lenovo. This puts Google and Meta in direct competition to become the default open platform for XR, creating a fascinating dynamic where both companies are vying to be the “Windows” or “Android” of the spatial era.

Real-World Applications: From the Factory Floor to the Living Room

The ultimate success of any platform depends on its utility. An Android-powered XR ecosystem has the potential to unlock transformative applications across various sectors.

Case Study: Enterprise and Industrial Use

A company like Boeing could equip its technicians with Android XR glasses for aircraft maintenance. A technician could look at an engine and see a digital overlay of the specific part they need to repair, along with step-by-step instructions and schematics floating in their view. They could also start a video call with a remote expert. The expert would see exactly what the technician sees and could draw annotations directly into the technician’s field of view to guide the repair. This kind of hands-free access to information could reduce errors, improve efficiency, and shorten training time. Magic Leap already has a foothold in this space, and a Google-powered platform would strengthen it with seamless cloud integration, robust security, and access to a larger pool of enterprise app developers.

The Consumer Dream

For consumers, the vision is a world of ambient computing. Imagine navigating an unfamiliar city with arrows overlaid directly on the streets, seeing real-time translation of foreign language signs, or watching a basketball game on a massive virtual screen in your living room while stats and player info appear beside it. Integration with Android phones would be key, allowing for a fluid transition of tasks and information between devices.

Section 4: Navigating the Future: Best Practices and Potential Hurdles

While the vision for an open XR ecosystem is compelling, the path to mainstream adoption is fraught with challenges. Developers, businesses, and consumers should approach this emerging technology with both excitement and a healthy dose of realism.

Tips and Considerations for Stakeholders

- For Developers: The time to start thinking spatially is now. Begin experimenting with 3D development engines like Unity and Unreal Engine, which are likely to be first-class citizens on the new platform. Focus on creating intuitive user interfaces (UI) and user experiences (UX) for a 3D world. Best practices include minimizing motion-to-photon latency, designing for user comfort to avoid nausea, and creating interactions that feel natural and not gimmicky.

- For Businesses: Look beyond the hype and identify concrete problems within your organization that XR could solve. Start with small pilot programs to test use cases like remote assistance, employee training, or data visualization. A key consideration is data security and employee privacy, which will be paramount for enterprise adoption.

- For Consumers: It’s important to manage expectations. Early devices will likely be expensive, bulky, and have limited battery life. The “killer app” that makes XR glasses a must-have for everyone may still be years away. However, the progress in this space is accelerating rapidly.

Common Pitfalls and Challenges to Overcome

The road ahead is not without significant obstacles. The “Glasshole” effect—the social awkwardness and privacy concerns associated with wearing face-mounted cameras—is a real social barrier that needs to be addressed through thoughtful design and transparent operation. Furthermore, data privacy is a monumental concern. These devices will collect unprecedented amounts of data about users and their environments, and establishing robust privacy controls and clear data policies will be critical for earning public trust. Finally, the technical hurdles of battery life, thermal management, and creating a comfortable, all-day wearable form factor remain the biggest engineering challenges in the industry.

Conclusion: The Next Chapter for Android is in Sight

The announcement of a collaborative, Android-powered XR platform is more than just another piece of tech news; it’s a declaration of intent. Google is leveraging its greatest asset, the open Android ecosystem, to build a foundational layer for the next era of computing. This strategic push aims to democratize spatial computing, fostering a diverse market of hardware and a vibrant community of developers, much as Android did for smartphones. Formidable challenges related to hardware, social acceptance, and privacy remain. Even so, this initiative injects a powerful dose of competition and innovation into the nascent XR market. The battle for the future of reality is underway, and by betting on an open, collaborative future, Android is positioning itself not just to participate, but to lead the charge into this new, immersive dimension.