The Next Frontier: How Android and On-Device AI Are Powering the XR Revolution

The Dawn of a New Computing Era: Android’s Expansion into Extended Reality

For over a decade, the Android ecosystem has been synonymous with the slab of glass and metal in our pockets. From budget devices to flagship Android phones, the operating system has defined mobile computing for billions. However, the landscape of personal technology is on the brink of a monumental shift. We are moving beyond handheld screens and into a world of spatial computing, a domain collectively known as Extended Reality (XR), which encompasses Virtual Reality (VR), Augmented Reality (AR), and Mixed Reality (MR). The latest Android news isn’t just about foldable screens or faster processors anymore; it’s about the emergence of a new category of Android gadgets designed to overlay the digital world onto our physical one. This new frontier is being pioneered by a powerful trifecta: the flexible and ubiquitous Android OS, highly specialized silicon from chipmakers like Qualcomm, and the transformative intelligence of on-device AI models like Google’s Gemini. This article delves into this convergence, exploring the technology, applications, and implications of the next generation of AI-powered Android XR devices.

The Convergence of Three Pillars: Android, AI, and XR Hardware

The creation of a compelling and seamless XR experience is not the result of a single breakthrough but rather the sophisticated integration of three core technological pillars. Each component plays a critical, symbiotic role in transforming a futuristic concept into a functional and user-friendly product. Understanding how these elements work together is key to appreciating the potential of these new Android gadgets.

The Android Foundation: More Than Just a Mobile OS

At its core, Android’s greatest strength has always been its open-source nature and adaptability. This has allowed manufacturers to tailor the OS for a vast array of form factors beyond phones, including watches (Wear OS), televisions (Android TV), and cars (Android Automotive OS). This history of diversification makes Android a natural foundation for the nascent XR market. Manufacturers can leverage Android’s mature Linux-based kernel, security frameworks, and development tools, significantly reducing the barrier to entry. Furthermore, the existing ecosystem of millions of Android developers represents a massive talent pool ready to build for this new platform. The challenge, and the opportunity, lies in adapting an OS built for 2D touchscreens to a fully realized 3D spatial environment. This involves creating new user interface paradigms, input methods (like hand and eye tracking), and frameworks that allow existing 2D apps to run harmoniously within a 3D space, perhaps as floating, resizable windows.

The Brains of the Operation: Advanced SoCs and On-Device AI

An XR headset is one of the most computationally demanding consumer devices ever conceived. It must simultaneously track the user’s head and hands in 3D space, map the surrounding environment, and render two high-resolution, high-framerate images—one for each eye—all with minimal latency to avoid motion sickness. This is where specialized Systems-on-a-Chip (SoCs), such as Qualcomm’s Snapdragon XR series, become indispensable. These chips are not simply repurposed smartphone processors; they are purpose-built with powerful CPUs, GPUs, and, most importantly, dedicated Neural Processing Units (NPUs). The NPU is the engine for on-device AI. By running sophisticated AI models like Gemini Nano directly on the headset, these devices can perform complex tasks like real-time object recognition, natural language understanding for voice commands, and intelligent context-aware assistance without a constant connection to the cloud. This on-device approach is crucial for privacy, speed, and enabling experiences that feel truly integrated with the user’s reality.

The App Ecosystem: Bridging the Gap Between 2D and 3D

A powerful piece of hardware is nothing without compelling software. The ultimate success of Android-based XR hinges on its ability to build a robust app ecosystem. Initially, this will likely involve a hybrid approach. Users will be able to access the vast library of existing Android apps—from Spotify and Netflix to Slack and Microsoft Word—as 2D panels within their virtual space. This provides immediate utility and familiarity. However, the true potential will be unlocked by native XR applications that are built from the ground up for spatial interaction. Imagine a collaborative design app where users can manipulate 3D models with their hands, or an educational app that lets you conduct a virtual science experiment on your kitchen table. The transition from 2D to 3D development will be a major focus for the industry, and platforms that make this transition easiest for developers will have a significant advantage.

Anatomy of an AI-Powered XR Headset

To truly grasp the technological leap these new Android gadgets represent, it’s essential to look under the hood. The components inside a modern XR headset are a marvel of miniaturization and specialized engineering, each designed to solve a specific challenge related to creating a believable and comfortable immersive experience.

Core Processing and AI Acceleration

The SoC is the heart of the device. Unlike in Android phones, where performance is often measured by app loading times or gaming benchmarks, the critical metric in XR is “motion-to-photon” latency: the delay between when you move your head and when the image on the screen updates to reflect that movement. The SoC’s CPU manages the operating system and application logic. The GPU is tasked with rendering complex 3D scenes for two separate displays at 90 frames per second or higher, which leaves roughly 11 milliseconds to produce each frame. The NPU, or AI accelerator, is the game-changer. It offloads tasks that are computationally expensive but highly parallelizable, such as interpreting hand gestures from camera feeds, identifying objects in the room for AR interactions, and processing voice commands locally. This division of labor leaves the GPU free to focus on rendering, which helps keep the visual experience smooth and reduces the risk of nausea.
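To make the latency arithmetic concrete, here is a minimal Python sketch. It computes the per-frame time budget for a given refresh rate and sums a set of pipeline stage latencies into a motion-to-photon estimate. The stage names and timings are invented for illustration and do not describe any particular headset; real pipelines also overlap stages rather than running them strictly in sequence.

```python
def frame_budget_ms(refresh_hz):
    """Time available to produce one frame at the given refresh rate."""
    return 1000.0 / refresh_hz


def motion_to_photon_ms(stage_ms):
    """Total latency of a strictly serial pipeline (a simplifying assumption)."""
    return sum(stage_ms.values())


# Hypothetical stage timings in milliseconds, for illustration only.
stages = {
    "sensor_sampling": 2.0,
    "head_tracking": 3.0,
    "rendering": 8.0,
    "display_scanout": 5.0,
}

for hz in (72, 90, 120):
    print(f"{hz} Hz -> {frame_budget_ms(hz):.2f} ms per frame")

print(f"serial motion-to-photon estimate: {motion_to_photon_ms(stages):.1f} ms")
```

With these illustrative numbers, the serial total (18 ms) exceeds a single 90 Hz frame budget (about 11.1 ms), which shows how quickly the individual stages add up.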

Sensors and Spatial Awareness

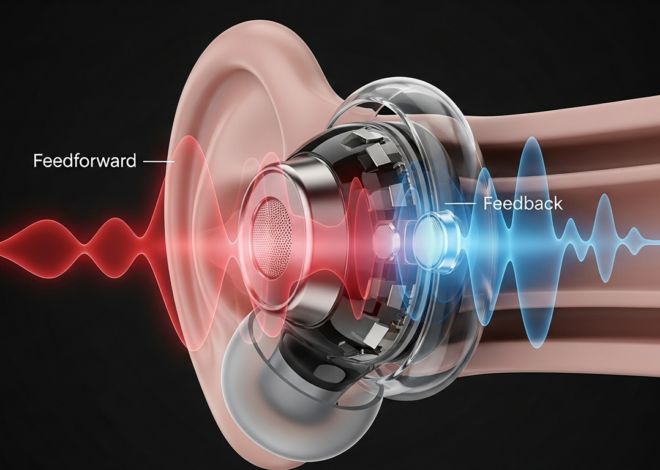

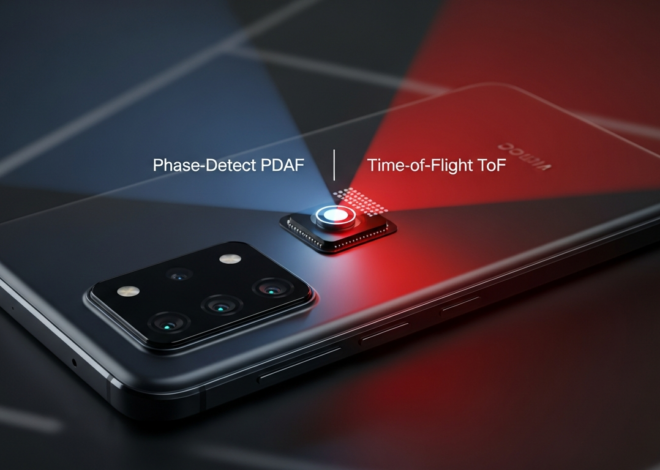

An XR headset carries a sophisticated array of sensors that act as its eyes and ears. The most prominent are the multiple grayscale cameras used for “inside-out” tracking. These cameras constantly scan the environment to determine the headset’s position and orientation in 3D space, providing six degrees of freedom (6DoF) tracking without the need for external base stations. Many devices also include high-resolution color cameras for “passthrough,” allowing the user to see the real world in full color, which is the foundation for mixed reality. Inside the headset, eye-tracking sensors monitor where the user is looking. This data is used for an optimization technique called foveated rendering, in which the device renders the region at the center of the user’s gaze in full resolution while reducing detail in their peripheral vision, substantially cutting the rendering workload. Finally, depth sensors such as LiDAR or other Time-of-Flight (ToF) sensors provide the depth data used to build a 3D mesh of the room, allowing virtual objects to interact realistically with physical surfaces, like having a virtual ball bounce off a real table.
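The savings from foveated rendering can be estimated with a rough Python sketch. It splits one eye’s image into tiles, assigns each tile a shading rate based on its distance from the gaze point, and reports the shading work relative to rendering every pixel at full resolution. The tile size, the two radii, and the three shading rates are assumptions chosen for illustration, not values from any shipping device.

```python
import math


def shading_scale(distance_px, inner_px, outer_px):
    """Fraction of full-resolution shading for a tile at this distance from the gaze."""
    if distance_px <= inner_px:
        return 1.0      # foveal region: full detail
    if distance_px <= outer_px:
        return 0.25     # mid region: one shaded sample per 2x2 pixels
    return 0.0625       # periphery: one shaded sample per 4x4 pixels


def foveated_cost(width, height, gaze, tile=32, inner_px=300.0, outer_px=700.0):
    """Shading work relative to shading every pixel at full resolution."""
    gx, gy = gaze
    shaded = 0.0
    total = 0
    for ty in range(0, height, tile):
        for tx in range(0, width, tile):
            w = min(tile, width - tx)    # clamp tiles at the right edge
            h = min(tile, height - ty)   # clamp tiles at the bottom edge
            cx, cy = tx + w / 2, ty + h / 2
            distance = math.hypot(cx - gx, cy - gy)
            shaded += w * h * shading_scale(distance, inner_px, outer_px)
            total += w * h
    return shaded / total


# Hypothetical 2048x2048 per-eye image with the gaze at the center.
cost = foveated_cost(2048, 2048, gaze=(1024, 1024))
print(f"relative shading work: {cost:.2f}")
```

Moving the `gaze` argument shifts the full-detail region, which is why this technique depends on the eye-tracking data described above.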

Display Technology and User Interface

The visual experience is paramount. Early VR headsets were bulky and produced a “screen-door effect” where the user could see the gaps between pixels. Modern devices are adopting advanced display technologies like Micro-OLED, which offer incredibly high pixel densities, deep blacks, and vibrant colors in a much smaller package. These are paired with innovative “pancake lenses,” which use a folded optical path to drastically reduce the distance needed between the display and the user’s eye. This is the key technology enabling the shift from bulky “ski goggle” designs to more compact, glasses-like form factors. The user interface also undergoes a radical transformation. Instead of tapping on glass, users interact through a combination of precise hand tracking, gaze selection (looking at a button to highlight it), and AI-powered voice commands.
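Gaze selection can be pictured as a ray test: cast a ray from the eye along the gaze direction and check whether it lands inside a floating panel. The Python sketch below assumes a simplified setup in which the panel is a flat rectangle facing the user in a plane of constant z. The `Panel` class and `gaze_hits_panel` function are hypothetical names for this example and are not part of any Android or XR API.

```python
from dataclasses import dataclass


@dataclass
class Panel:
    """A flat rectangle in the plane z = center[2], facing the user."""
    center: tuple
    width: float
    height: float


def gaze_hits_panel(origin, direction, panel):
    """Return True if the gaze ray from `origin` along `direction` hits the panel."""
    ox, oy, oz = origin
    dx, dy, dz = direction
    cx, cy, cz = panel.center
    if abs(dz) < 1e-9:
        return False            # gaze runs parallel to the panel plane
    t = (cz - oz) / dz          # ray parameter where the gaze meets the plane
    if t <= 0:
        return False            # the panel is behind the viewer
    hit_x = ox + t * dx
    hit_y = oy + t * dy
    return (abs(hit_x - cx) <= panel.width / 2
            and abs(hit_y - cy) <= panel.height / 2)


# Hypothetical panel two meters in front of the user (negative z is forward).
panel = Panel(center=(0.0, 0.0, -2.0), width=1.0, height=0.6)
print(gaze_hits_panel((0.0, 0.0, 0.0), (0.0, 0.0, -1.0), panel))  # looking straight ahead
print(gaze_hits_panel((0.0, 0.0, 0.0), (1.0, 0.0, -1.0), panel))  # looking off to the side
```

A real interface would typically combine a test like this with a confirming action, such as a pinch gesture or a voice command, before treating the highlighted button as selected.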

From Niche Gadget to Everyday Tool: Real-World XR Scenarios

The convergence of a mature OS, powerful hardware, and intelligent AI is poised to elevate XR from a niche gaming accessory to a versatile computing platform. The ability to blend digital information with our physical world unlocks use cases across productivity, entertainment, and daily assistance that were previously the stuff of science fiction.

Productivity and Collaboration Reimagined

Consider a team of architects and engineers spread across different continents. With AI-powered XR headsets, they can enter a shared virtual workspace and interact with a full-scale 3D model of a building. They can walk through its halls, make annotations in real-time, and manipulate structural elements with their hands, all while communicating as if they were in the same room. Alongside this 3D model, they can pull up familiar 2D Android apps like Google Sheets with project data or Slack for team communication, creating a truly integrated and boundless digital desktop. The on-device AI could even act as an assistant, instantly pulling up building codes or material specifications based on a simple voice query.

The Gemini Effect: A Context-Aware Spatial Assistant

The integration of a powerful generative AI model like Gemini directly into the operating system transforms the device from a passive content viewer into a proactive assistant. This is where the true revolution lies. Imagine you’re trying to assemble a piece of flat-pack furniture. Instead of deciphering confusing paper instructions, you put on your XR headset. The device’s cameras identify the parts, and the AI overlays a step-by-step 3D animation directly onto your real-world view, showing you exactly which screw goes where. Stuck on a step? Just ask, “What do I do with part C?” and the AI will verbally and visually guide you. This same principle applies to countless scenarios: cooking a new recipe with ingredients and instructions appearing on your countertop, learning to play the piano with virtual keys lighting up, or getting a live AR overlay of walking directions on the city streets in front of you.

Navigating the XR Landscape: Challenges and Opportunities

Despite the immense promise, the path to mainstream adoption for these advanced Android gadgets is not without its obstacles. Manufacturers and consumers need to be aware of the challenges, while developers should focus on the unique opportunities this new platform presents.

Key Hurdles to Mainstream Adoption

Several significant challenges remain. First, battery life is a critical concern; the immense processing required for XR is power-hungry, and a device that dies in two hours has limited utility. Second, ergonomics and comfort still need work. While devices are getting smaller, a computer worn on your face for extended periods must be lightweight and comfortable. Third, social acceptance will take time; overcoming the awkwardness of wearing headsets in public will be a gradual process. Finally, the “killer app” problem persists. While productivity and assistance are strong use cases, a truly must-have application or experience is needed to drive mass-market appeal beyond tech enthusiasts.

Tips for Early Adopters and Developers

For consumers interested in being early adopters, it’s crucial to manage expectations. Look for devices with high-quality passthrough, comfortable ergonomics, and a clear commitment from the manufacturer to build out an app ecosystem. For developers, the key is to think spatially. Simply porting a 2D Android phone app into a floating window is a start, but true innovation will come from creating experiences that could only exist in XR. Focus on intuitive interaction models using hands and voice, and prioritize performance and optimization to ensure a smooth, comfortable user experience.

The Privacy and Data Conundrum

We must also address the profound privacy implications. These devices, by their very nature, are equipped with cameras and sensors that can map our homes, track our eye movements, and listen to our conversations. The emphasis on on-device AI processing is a critical first step in mitigating these risks, as it keeps sensitive data on the device rather than sending it to the cloud. However, manufacturers must be transparent about what data is collected and provide users with robust, easy-to-understand privacy controls. Establishing trust will be paramount for widespread acceptance.

Conclusion: The Next Chapter for Android

The narrative of Android is expanding. The next wave of Android gadgets is set to break free from the confines of the 2D screen, ushering in an era of spatial computing powered by artificial intelligence. The powerful combination of the adaptable Android operating system, purpose-built hardware like Qualcomm’s XR chips, and the contextual intelligence of on-device AI models is not merely an incremental upgrade—it represents a fundamental rethinking of how we interact with digital information. While challenges like battery life, ergonomics, and privacy must be diligently addressed, the trajectory is clear. These devices are evolving from niche curiosities into powerful tools for productivity, creativity, and assistance. The journey is just beginning, but this convergence of technologies is laying the groundwork for the next major computing platform, promising a future where our digital and physical worlds are seamlessly intertwined.