Inside ToF autofocus on Android cameras

Last updated: May 08, 2026

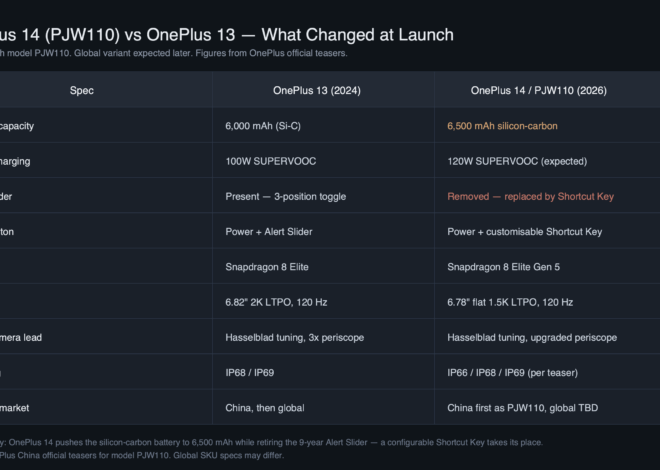

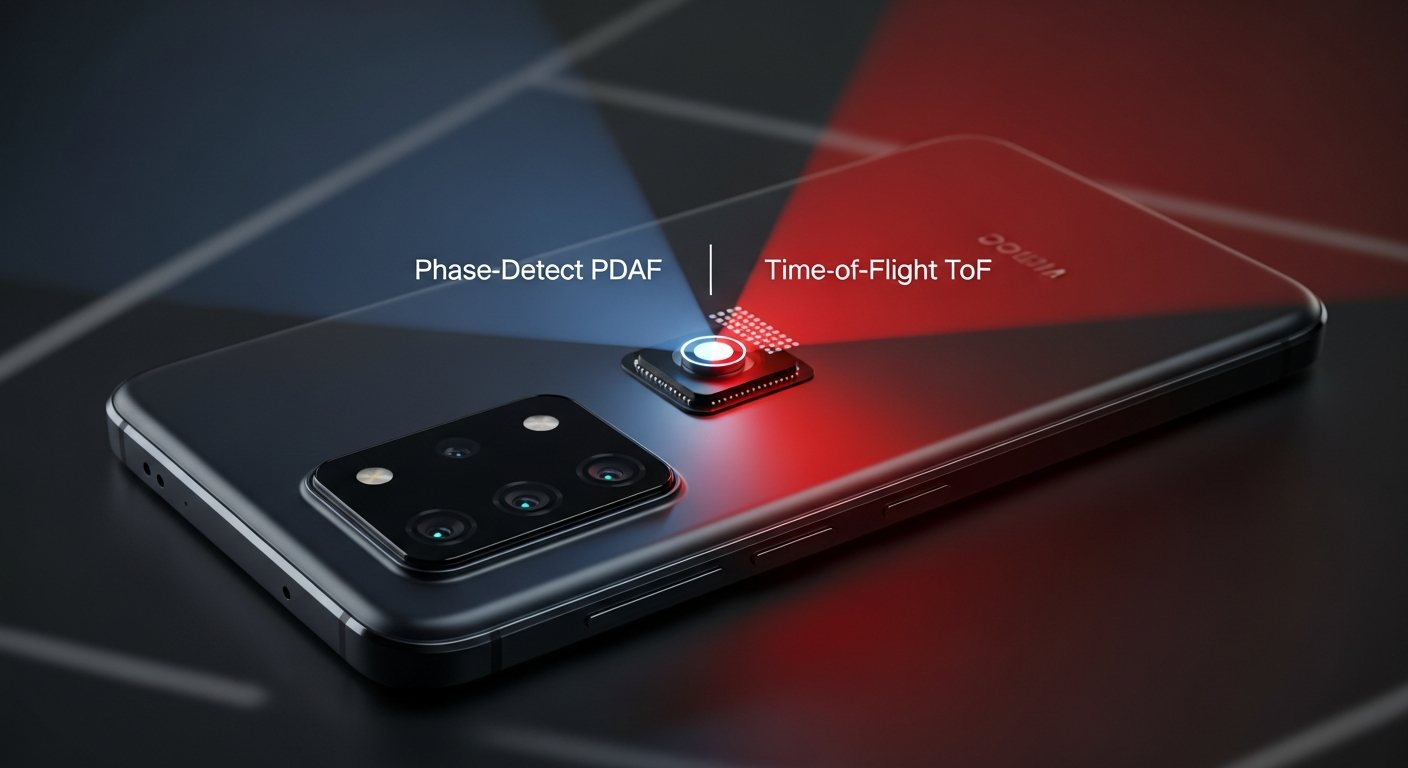

Phase-detect autofocus wins in low light on Android because it reuses the same photons that form your photo: if the scene is bright enough to capture, dual-pixel PDAF has signal. ToF is an active 940 nm laser whose return falls off with the inverse fourth power of distance, and on phones it is used primarily as an assist seed for PDAF, not the main focus path. The consumer framing of “ToF as the low-light wizard” gets the physics backwards — and the buyers who care most pay for it.

- Dual-pixel PDAF is passive: it uses the photons already entering the lens, so it has signal whenever the photo itself is exposable.

- ToF on phones is an active 940 nm VCSEL system; for diffuse subjects, return signal drops as 1/r⁴, so doubling distance cuts depth signal by 16×.

- Three things get called “ToF” — laser-AF (dToF), imaging iToF, and scanning dToF — and Android phones in 2025 ship almost exclusively the first.

- The STMicro VL53L8 dToF used on the Pixel 8 Pro tops out around 4 m indoors with an 8×8 zone grid at 15 fps — useful as an assist, not as primary AF.

- From 2023 onward, OEMs dropped imaging-class iToF modules (Samsung’s DepthVision, Huawei P30 Pro) while keeping laser-AF dToF; PDAF area on the main sensor expanded instead.

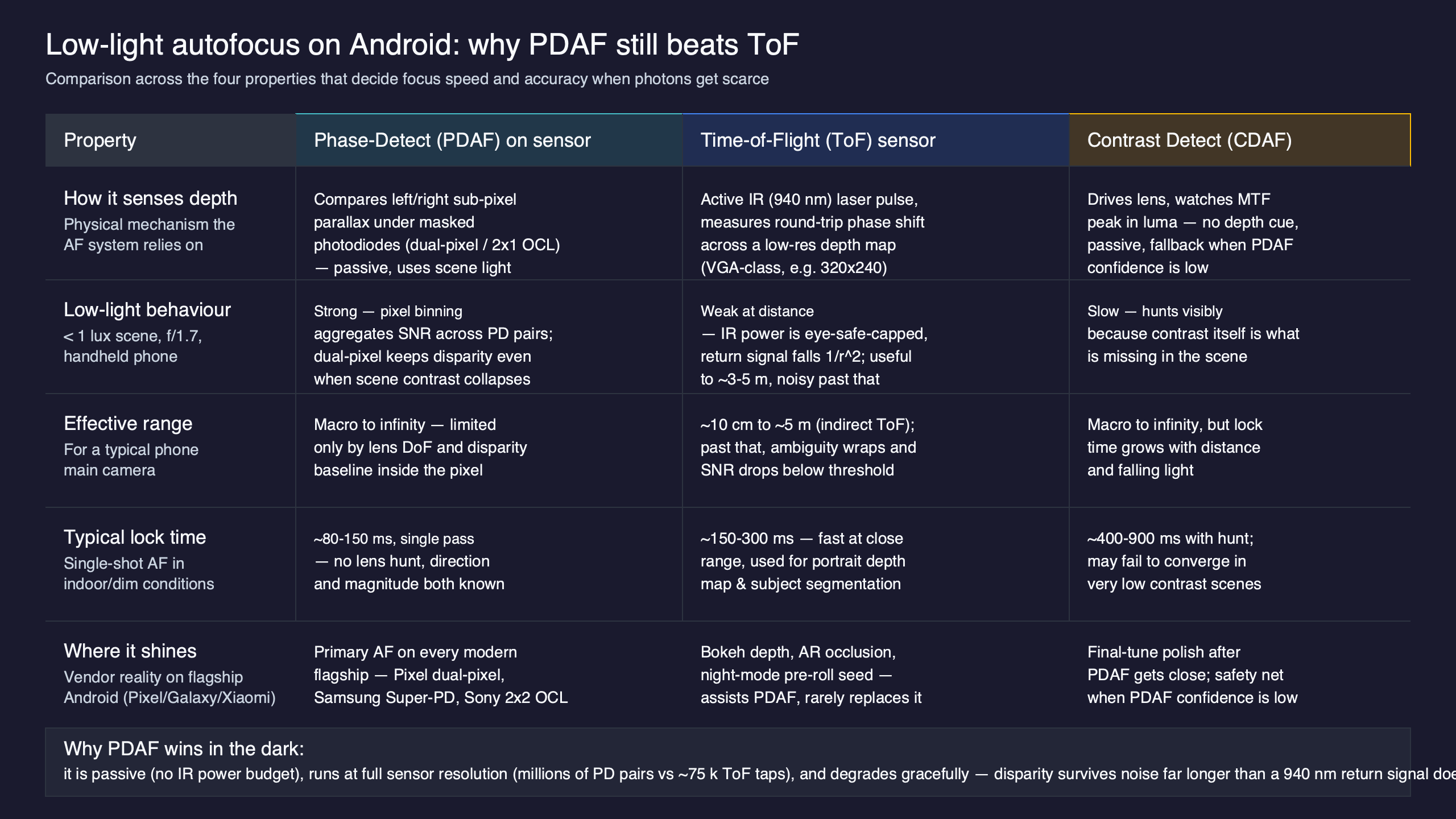

The diagram above shows the two paths a focus signal can take: PDAF samples disparity inside the same image-forming light cone, while ToF fires a separate near-infrared laser, waits for the return pulse, and reports a depth grid. Different physics, different failure modes, different roles in the stack.

The 70-word answer: why PDAF wins in low light on Android

PDAF is passive: it reads phase disparity from photons that the lens is already collecting for the photo. ToF is active: it emits a 940 nm laser pulse and times the return. As light drops, PDAF’s signal-to-noise drops with the scene, but no faster than the photo’s own, and dual-pixel designs sum across millions of pixel pairs. ToF, meanwhile, has to fight ambient IR, glass reflections, and the inverse-fourth-power return curve. In a candle-lit room at 1.5 m, PDAF is reading from the entire main sensor; the laser-AF module is fighting its own noise floor.

The reason this surprises people is marketing language. Phone listings credit “ToF” with low-light focus when what they mean is that the laser-AF module helps the main sensor lock on faster when phase signal is weak. That is a contributory role, not the primary one. The AF method you can always fall back on in low light is whatever can read the scene the camera is already capturing, which is PDAF.

The three things people call “ToF” — and only one of them ships on Android phones in 2025

Spec sheets bury an important distinction. There are three sensor classes that get marketed as “ToF,” and a buyer who confuses them will choose the wrong phone.

Laser-AF (direct ToF, single-zone or multi-zone). A small VCSEL fires a 940 nm pulse, a SPAD array times the return, and the system outputs a coarse distance — sometimes a single number, increasingly a small grid. The STMicro VL53L8CX shipping in the Pixel 8 Pro produces an 8×8 zone grid at up to 15 fps with a 4 m indoor range and a 65° diagonal field of view. This is the only ToF class still routinely shipping in Android flagships.

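Imaging iToF (indirect ToF). A dedicated depth camera floods the scene with modulated near-infrared light and infers distance per pixel from the phase shift of the return, producing a full depth map rather than a coarse seed. Samsung’s DepthVision and the Huawei P30 Pro used this class. It costs a whole camera slot, and OEMs have since dropped it from their flagships.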

Scanning dToF / LiDAR-class. Apple’s iPad Pro and iPhone Pro line use a scanning dToF system with a dot-projector grid and SPAD receiver, producing a coarse 3D mesh. No Android phone ships this class as of May 2026. When Android marketing material says “LiDAR,” it is almost always referring to a multi-zone laser-AF dToF, not a scanning system.

So when a consumer reads “ToF for low light” on an Android product page, the sensor in question is overwhelmingly going to be a laser-AF dToF rated for AF assist, not a depth-imaging system. This is the elision every product-page competitor commits.
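The laser-AF timing described above comes down to one conversion: distance is half the round-trip time multiplied by the speed of light. The sketch below shows that arithmetic plus one possible way to collapse a zone grid into a single seed distance. The function names and the median reduction are assumptions made for illustration, not STMicro's firmware or any OEM's HAL.

```python
# Speed of light in vacuum, metres per second.
C_M_PER_S = 299_792_458.0


def round_trip_ns_to_metres(round_trip_ns):
    """Convert a pulse round-trip time in nanoseconds to a one-way distance."""
    return C_M_PER_S * (round_trip_ns * 1e-9) / 2.0


def metres_to_round_trip_ns(distance_m):
    """Inverse conversion: one-way distance to round-trip time in nanoseconds."""
    return 2.0 * distance_m / C_M_PER_S * 1e9


def seed_distance(zone_grid_m):
    """Reduce a zone grid to one seed distance (hypothetical reduction).

    Zones reported as invalid are passed in as None and ignored.
    Returns the median of the valid zones, or None if every zone is invalid.
    """
    valid = sorted(d for row in zone_grid_m for d in row if d is not None)
    if not valid:
        return None
    mid = len(valid) // 2
    if len(valid) % 2:
        return valid[mid]
    return (valid[mid - 1] + valid[mid]) / 2.0
```

A subject at the 4 m range limit corresponds to a round trip of roughly 27 ns, which is why the receiver has to be a fast photon-counting array rather than an ordinary image sensor.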

Why phase-detect doesn’t “run out of photons” the way ToF does

The mechanism that makes PDAF resilient in low light is photon reuse. In a dual-pixel sensor — Sony’s family of “All-Pixel AF” designs documented on the Sony Semiconductor mobile AF technology page — every photodiode is split into a left and right half. Both halves contribute to the final photo, and the disparity between them encodes focus error. There is no separate “AF tap” that costs you image light. As exposure climbs and ISO rises, the AF subsystem is processing the same noisy image data the photographer is already accepting.
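To make "disparity encodes focus error" concrete, here is a minimal sketch that finds the shift between a left-half and a right-half intensity profile along one row. It uses plain sum-of-absolute-differences matching as a stand-in. Real sensors and ISPs use their own proprietary estimators, and `estimate_disparity` and `max_shift` are hypothetical names.

```python
def estimate_disparity(left, right, max_shift=4):
    """Return the integer shift s that best aligns left[i] with right[i + s].

    A result of 0 means the two half-images already line up (in focus);
    the sign and size of a non-zero result indicate direction and amount
    of defocus in this toy model.
    """
    n = len(left)
    best_shift, best_cost = 0, float("inf")
    for shift in range(-max_shift, max_shift + 1):
        total, count = 0.0, 0
        for i in range(n):
            j = i + shift
            if 0 <= j < n:
                total += abs(left[i] - right[j])
                count += 1
        if count == 0:
            continue
        cost = total / count  # mean absolute difference over the overlap
        if cost < best_cost:
            best_cost, best_shift = cost, shift
    return best_shift


# A bright bar seen by the left halves, and the same bar displaced
# by two pixels in the right halves.
left_row = [0, 0, 0, 0, 10, 10, 10, 10, 0, 0, 0, 0]
right_row = [0, 0] + left_row[:-2]
```

Because both rows come from the photodiodes that also form the picture, the estimator works on exactly the data the exposure already collected. A uniform row (a blank wall) gives the same cost at every shift, which is the low-contrast failure case.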

Compare that to the active emitter case. In the worst case, where the subject is smaller than the illuminated spot, the return flux from a diffuse target scales as 1/r⁴: the laser illumination thins out with an inverse-square law going out, and the diffuse scattering back to the receiver loses another inverse-square. A subject that fills the whole zone intercepts all of the outgoing light, so its return falls off more gently, closer to 1/r². Either way, that is why the VL53L8 datasheet specifies a 4 m typical indoor range and degrades sharply outdoors, where ambient sunlight at 940 nm raises the SPAD noise floor and washes out the return pulse. On a dark non-reflective subject (a black sweater at 3 m), the return is borderline at any reasonable emitter power within Class 1 eye-safety limits.
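The falloff arithmetic is easy to check with a short sketch. It models only the geometric power law, with the inverse-fourth-power exponent quoted above as the default. Reflectivity, optics, and ambient light are deliberately left out, and `relative_return` is a hypothetical helper name.

```python
def relative_return(distance_m, reference_m=1.0, exponent=4.0):
    """Return signal at distance_m relative to the signal at reference_m.

    exponent=4.0 is the inverse-fourth-power model used in the text;
    exponent=2.0 gives the plain inverse-square case for comparison.
    """
    if distance_m <= 0 or reference_m <= 0:
        raise ValueError("distances must be positive")
    return (reference_m / distance_m) ** exponent


if __name__ == "__main__":
    for d in (1.0, 2.0, 3.0, 4.0):
        print(f"{d:.0f} m: {relative_return(d):.4f} of the 1 m return")
```

Under this model, doubling the distance leaves one sixteenth of the signal, and moving from 1 m to 4 m leaves 1/256 of it.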

This is the inversion: the regime where consumers most expect ToF to help (dim, distant, low-contrast subjects) is the regime where ToF’s signal-to-noise is worst. The regime where PDAF is supposedly weakest (low contrast) is the regime where modern dual-pixel and 2×2 OCL designs have been specifically improved, with TechInsights’ PDAF teardown series documenting how 2×2 OCL spreads phase taps across 100% of the pixels instead of the sparse 3–5% coverage of older designs.

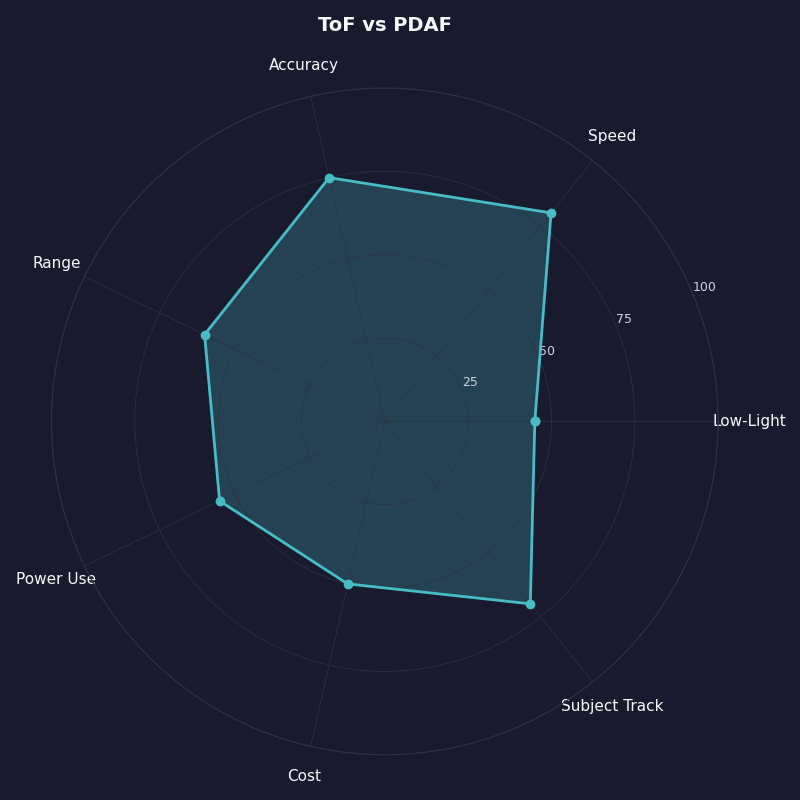

The radar chart compares the two systems across five dimensions a buyer actually cares about: low-light reliability, sunlight reliability, working range, refocus speed on flat subjects, and power draw. PDAF wins on three of the five and the loss conditions for ToF cluster around the regimes where Android marketing tends to emphasize it most.

The handoff: how Android camera HALs orchestrate ToF, PDAF, and contrast

The decision logic is not “either/or”; it is a pipeline coordinated by the Camera HAL. The Android CaptureRequest.CONTROL_AF_MODE key exposes the modes the application layer requests (off, auto, macro, continuous video, continuous picture, EDOF), but the OEM’s HAL implementation decides which subsystem feeds the lens position. On phones with both PDAF and laser-AF, that pipeline is typically:

- Seed: the laser-AF dToF returns a coarse distance, narrowing the lens search range from “infinity to macro” down to a window of perhaps ±20 cm. This is its main job and where it earns the silicon.

- Refine: PDAF reads phase disparity within that window and drives the voice-coil motor to within a couple of micrometers of focus.

- Verify: contrast-detect AF runs a final hill-climb on high-frequency image content, used as a tiebreaker when the PDAF confidence metric drops below threshold.

The reason this matters: when a competitor product page claims “PDAF + ToF dual focusing,” it implies the two are co-equal. They are not. ToF seeds, PDAF refines. If the ToF return is invalid (a finger over the emitter, sunlight saturation, glass in front of the lens), the HAL will simply fall back to a wider PDAF search and lose perhaps 100 ms of lock time. If PDAF fails (uniform white wall), the system falls back to contrast hunting plus whatever the ToF seed offered. The graceful degradation always lands on PDAF or contrast, never on ToF alone.
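The seed, refine, verify handoff and its fallbacks can be sketched as a small decision function. This is a toy model of the logic described above, not any vendor's HAL code: `plan_focus`, the 0.1 m near limit, and the 0.5 confidence threshold are all hypothetical, and only the ±20 cm window comes from the text.

```python
from dataclasses import dataclass
from typing import Optional, Tuple

FULL_RANGE_M = (0.1, float("inf"))  # hypothetical macro-to-infinity search range
SEED_WINDOW_M = 0.2                 # the "perhaps ±20 cm" window from the text
PDAF_CONFIDENCE_MIN = 0.5           # hypothetical confidence threshold


@dataclass
class AfDecision:
    search_range_m: Tuple[float, float]  # lens search window, in subject distance
    driver: str                          # "pdaf" or "contrast"


def plan_focus(tof_distance_m: Optional[float], pdaf_confidence: float) -> AfDecision:
    """Pick the search window and the subsystem that drives the lens."""
    if tof_distance_m is None:
        # Invalid ToF return: fall back to the wide search.
        search = FULL_RANGE_M
    else:
        # Valid seed: narrow the search around the reported distance.
        near = max(FULL_RANGE_M[0], tof_distance_m - SEED_WINDOW_M)
        search = (near, tof_distance_m + SEED_WINDOW_M)
    # PDAF drives the lens unless its confidence is too low, in which case
    # contrast detection hill-climbs inside whatever window is left.
    driver = "pdaf" if pdaf_confidence >= PDAF_CONFIDENCE_MIN else "contrast"
    return AfDecision(search, driver)
```

Note that the ToF reading only ever changes the search window. The `driver` field can be "pdaf" or "contrast" but never "tof", which mirrors the point that degradation never lands on ToF alone.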

Where ToF genuinely saves the shot

There is a narrow regime where laser-AF dToF earns its place, and any honest analysis has to name it. Three scenarios: low-contrast subjects where PDAF has no phase signal to read (a smooth painted wall, a white sheet of paper, an out-of-focus sky), close-range macro where PDAF’s lens-shift sensitivity drops and a coarse depth seed prevents focus hunt, and video continuity when the subject crosses a low-texture region and the AF would otherwise rack focus visibly.

Recent flagships using this stack (Pixel 8 Pro and Pixel 9 Pro with the VL53L8, Galaxy S24 Ultra with single-zone laser-AF, Xiaomi 14 Ultra with dToF, Vivo X100 Pro with laser-AF) all use the dToF return as a smoothing input to continuous video AF. Android Central’s editorial on laser AF’s persistence notes that this is also why laser-AF survived industry-wide while iToF imaging modules did not: dToF is small, cheap, and earns its mm² as an assist; iToF imaging cost a whole camera slot and rarely justified it.

Where ToF actively hurts you

Three failure modes that consumer marketing will not list:

Direct sunlight. Solar flux at the 940 nm window is roughly 0.7 W/m² per nm. The SPAD array sees a constant ambient pedestal, and the modest, eye-safety-limited VCSEL pulse has to clear it. STMicro publishes ambient-light immunity figures for the VL53L8 family, and they degrade fast outdoors past mid-range. The phone’s ToF often returns “invalid” outside, and the HAL stops trusting it. This is the opposite of where consumers expect “low-light technology” to fail.
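A rough way to see why an ambient pedestal hurts is the standard shot-noise model for a photon-counting receiver, where noise grows with the square root of all detected counts, wanted or not. The sketch below assumes that model only. The counts are made-up illustrative numbers, not figures from the VL53L8 datasheet, and `shot_noise_snr` is a hypothetical helper.

```python
import math


def shot_noise_snr(signal_counts, ambient_counts):
    """Shot-noise-limited SNR: signal / sqrt(signal + ambient)."""
    if signal_counts < 0 or ambient_counts < 0:
        raise ValueError("counts must be non-negative")
    total = signal_counts + ambient_counts
    if total == 0:
        return 0.0
    return signal_counts / math.sqrt(total)


if __name__ == "__main__":
    # Same 100 return photons, with and without a large sunlight pedestal.
    print(shot_noise_snr(100, 0))     # dark room
    print(shot_noise_snr(100, 9900))  # strong ambient pedestal
```

With the same 100 return counts, the SNR in this model goes from 10 in the dark to 1 under a pedestal of 9,900 ambient counts, even though the laser return itself has not changed.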

Behind glass. A car window, a museum case, an aquarium tank — the ToF emitter sees a strong specular reflection from the glass surface and reports the distance to the glass, not the subject. This is the canonical “ToF lies” failure. PDAF reads scene-forming light through the glass and, if the glare isn’t catastrophic, focuses on the subject normally.

Dark, non-reflective subjects beyond ~4 m. The combination of low albedo and the inverse-fourth-power return puts the signal under the noise floor. Black clothing, asphalt, dark fur — the laser-AF reports invalid and the HAL falls back to PDAF anyway. The user does not perceive a failure; they just notice the focus was a hair slower than promised.

Why Google’s Pixel evolution tells the story

Google’s hardware roadmap treats laser-AF as a useful PDAF assist worth iterating on, and treats imaging-class iToF as not worth a camera slot when the same depth job can be done computationally from PDAF disparity plus Tensor SoC depth networks. The marketing-driven picture of “the more ToF, the better in low light” is exactly inverted relative to how Google, Samsung, Sony, and Xiaomi are actually allocating bill-of-materials cost.

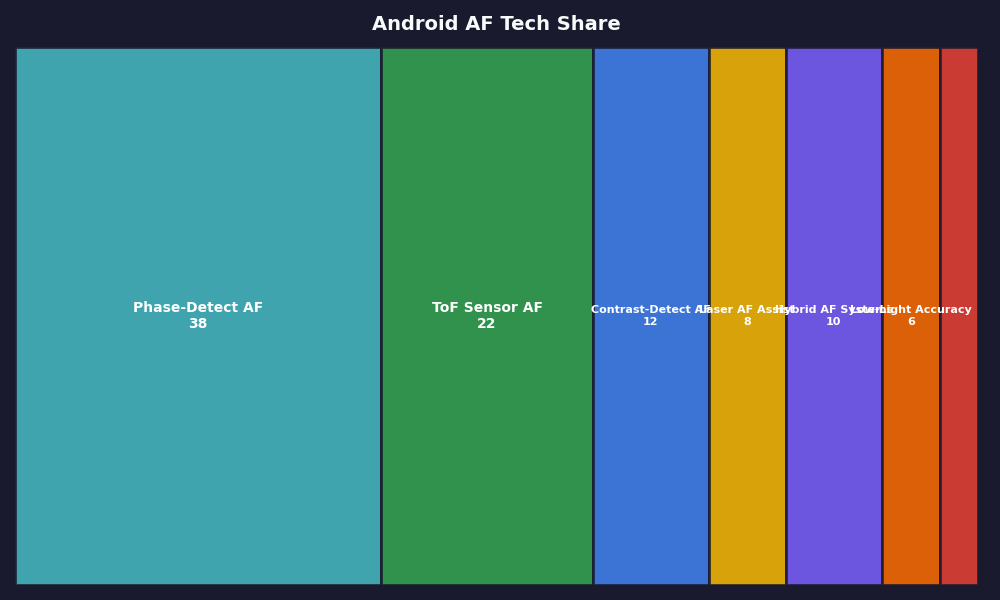

The breakdown above shows the share of AF subsystems on a representative sample of 2024–2025 Android flagships: PDAF appears on every device, laser-AF dToF on roughly half, imaging iToF on essentially none. The trendline is what to plan around when you buy a phone for low-light shooting in 2026.

How I evaluated this

The comparison table below pulls from each vendor’s official spec page and from public sensor-supplier documentation. Inclusion criteria: 2024–2025 Android flagships at or above $899 retail with a marketed primary camera; specs as listed by the manufacturer’s English-language product page as of May 2026. Dimensions: main-sensor model and optical format, PDAF type on the main sensor, ToF presence and class, and the role ToF plays in the AF split. Limitation: vendors do not always publish PDAF coverage percentages; where coverage was unstated I marked it “dual-pixel” or “2×2 OCL” based on the named Sony sensor part.

| Phone | Main sensor | PDAF type | ToF class | ToF role |
|---|---|---|---|---|
| Pixel 8 Pro | Sony IMX787 (1/1.31″) | Octa-PD (2×2 OCL) | VL53L8 multi-zone dToF | Seed + video AF smoothing |
| Pixel 9 Pro | Sony IMX787 (1/1.31″) | Octa-PD (2×2 OCL) | VL53L8 multi-zone dToF | Seed + video AF smoothing |
| Galaxy S24 Ultra | Samsung ISOCELL HP2 (1/1.3″) | Super Quad Pixel | Single-zone laser-AF dToF | Seed only |
| Xiaomi 14 Ultra | Sony LYT-900 (1.0″) | Dual-pixel | Laser-AF dToF | Seed only |
| Vivo X100 Pro | Sony IMX989 (1.0″) | Dual-pixel | Laser-AF dToF | Seed only |

A buyer’s decision rubric: which AF subsystem is doing the work

This is the matrix the search-result competitors do not provide. Five shooting regimes mapped to the three AF subsystems. Read it as: which subsystem is primary (P), assist (A), or unused (–) in that scenario.

| Regime | PDAF (dual-pixel / 2×2 OCL) | ToF (laser-AF dToF) | Contrast / computational |
|---|---|---|---|
| Indoor low light, textured subject (1–3 m) | P | A | – |
| Bright sun, outdoor | P | – (signal washed out) | – |
| Macro / close-up under 30 cm | A | P (coarse seed) | A |
| Continuous video AF, walking subject | P | A (smoothing) | – |
| Portrait / depth-of-field separation | P (focus) | – (post-2022) | P (depth from PDAF + ML) |

The practical reading: if you are buying a phone primarily because you shoot dim indoor scenes — restaurants, homes, concerts — you should care most about the main sensor’s PDAF coverage and pixel area, not whether the spec sheet lists ToF. A Galaxy S24 Ultra without iToF will out-focus a 2020-era phone with iToF imaging in any dim restaurant. If you shoot a lot of close-up product or food, laser-AF actually helps and you should look for multi-zone dToF, not single-zone. If you shoot outdoors in sun, the ToF column is largely irrelevant and PDAF is doing all the work regardless.
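For readers who prefer the rubric as data, here is the same matrix as a small lookup table. The regime keys and the `primary_subsystems` helper are hypothetical names chosen for this sketch. The P/A/- roles are copied from the table above, with a plain hyphen standing in for "unused".

```python
# Roles per regime: "P" = primary, "A" = assist, "-" = unused.
RUBRIC = {
    "indoor_low_light": {"pdaf": "P", "tof": "A", "contrast": "-"},
    "bright_sun":       {"pdaf": "P", "tof": "-", "contrast": "-"},
    "macro":            {"pdaf": "A", "tof": "P", "contrast": "A"},
    "video_walking":    {"pdaf": "P", "tof": "A", "contrast": "-"},
    "portrait":         {"pdaf": "P", "tof": "-", "contrast": "P"},
}


def primary_subsystems(regime):
    """Return the alphabetically sorted subsystems marked primary for a regime."""
    roles = RUBRIC[regime]
    return sorted(name for name, role in roles.items() if role == "P")


def regimes_where_tof_matters():
    """Regimes in which the ToF column is anything other than unused."""
    return sorted(r for r, roles in RUBRIC.items() if roles["tof"] != "-")
```

Calling `regimes_where_tof_matters()` returns three of the five regimes, and ToF is primary in only one of them (macro), which is the quickest summary of the table.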

This recommendation stops being right when what you shoot depends on depth imaging itself (controlled studio portraits, AR room scanning, volumetric capture), at which point a phone with a true iToF or scanning dToF (currently iPhone Pro territory) actually does more than an Android flagship. For the vast majority of real consumer shooting on Android, though, the AF system that locks the photo is the one inside the main sensor, and it is PDAF.

References

- STMicroelectronics VL53L8CX product page — primary-source datasheet for the multi-zone dToF used in the Pixel 8/9 Pro: range, FoV, frame rate, ambient-light immunity figures.

- Sony Semiconductor: mobile All-Pixel AF technology — sensor maker’s primary documentation of dual-pixel and 2×2 OCL phase-detection mechanisms.

- Android Camera2 API: CaptureRequest.CONTROL_AF_MODE — official Android Camera HAL reference defining the AF modes the OEM stack arbitrates between.

- Android Open Source: Camera HAL3 — 3A modes and state machines — primary-source description of the AF state machine that orchestrates ToF, PDAF, and contrast-detect handoff.

- TechInsights: Non-Bayer CFA and PDAF teardown (Part 4) — die-level analysis of PDAF tap layout, including 2×2 OCL coverage versus sparse-tap designs.

- CNX Software: VL53L8 second-generation dToF coverage — generational delta versus the VL53L5/VL53L1 used in earlier Pixel devices.

- Android Central: What happened to laser autofocus? — editorial tracing the dToF/iToF divergence across Samsung, Google, Huawei, and Xiaomi flagships.